OpenAI Opens GPT-5.5 Bio Bug Bounty to Test Frontier AI Safety Controls

OpenAI is inviting vetted red-teamers, security researchers, and biosecurity specialists to test whether GPT-5.5 can be universally jailbroken on five biology safety questions. The private bounty offers $25,000 for the first true universal jailbreak.

AWS Makes Frontier Agents Its Next Big Enterprise AI Bet

AWS is pitching frontier agents as the next phase of enterprise AI: systems that can work autonomously, at scale, and across long-running goals. The company is using Kiro, DevOps agents, security agents, and sustainability data projects to show where the approach could land first.

Microsoft Commits $18 Billion to Australia's AI Infrastructure Push

Microsoft plans to spend $18 billion in Australia through 2029, expanding Azure capacity, cybersecurity partnerships, AI safety work, and workforce training. The commitment is part of a broader global race to secure compute, policy alignment, and national AI capability.

OpenAI Launches ChatGPT Images 2.0 as a More Precise, Multilingual Visual Engine

OpenAI has launched ChatGPT Images 2.0 across ChatGPT, Codex, and the API, positioning image generation less as a novelty feature and more as a serious visual production system. The new model adds stronger instruction following, better text rendering, broader language support, flexible aspect ratios, and tighter links to reasoning workflows.

Anthropic Launches Claude Opus 4.7 With Stronger Coding, Sharper Vision, and New Cyber Guardrails

Anthropic has released Claude Opus 4.7 as a broad upgrade to Opus 4.6, pairing better software engineering and high-resolution vision with a new cyber safety layer meant to test how stronger models can be deployed without widening dangerous misuse.

OpenAI Unveils GPT-Rosalind, a Life Sciences Reasoning Model for Drug Discovery and Biology

OpenAI has introduced GPT-Rosalind, a new life sciences reasoning model aimed at biology, drug discovery, and translational medicine workflows. The launch pairs stronger scientific tool use with a trusted-access rollout for qualified enterprise research teams in the U.S.

Anthropic Appears to Be Requiring KYC Checks for Some Claude Max Users

Reports circulating on X suggest Anthropic has begun imposing mandatory KYC verification on some Claude Max users, raising fresh questions about identity checks, account sharing, and access controls in premium AI subscriptions.

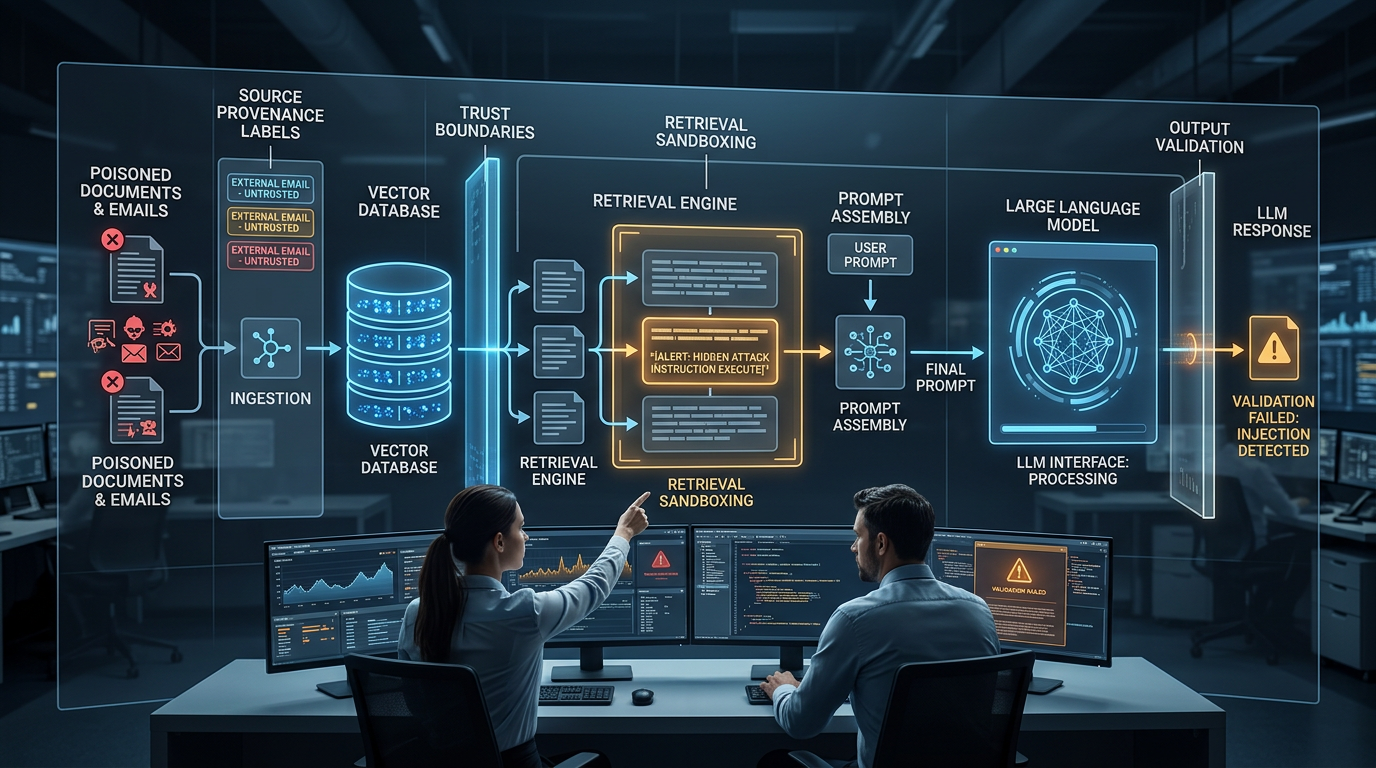

How Indirect Prompt Injection Exploits RAG Pipelines, and 4 Controls That Actually Contain It

When your LLM retrieves documents, emails, or web pages to answer queries, every one of those sources is a potential injection vector. Here is how indirect prompt injection works inside RAG architectures and what technical controls reduce your exposure.

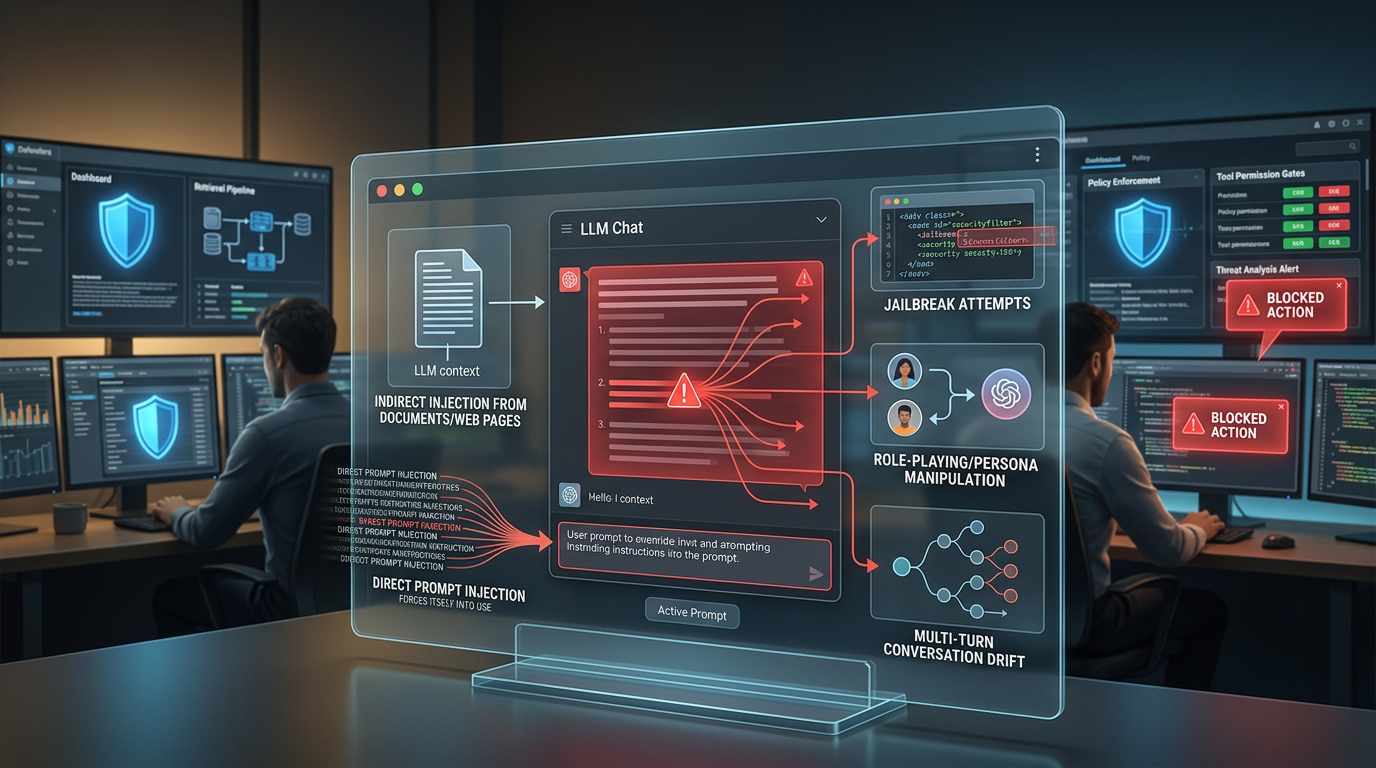

5 Types of Prompt Injection Attacks Targeting Deployed LLMs and How to Block Each One

Not all prompt injection attacks work the same way. This breakdown covers direct injection, indirect injection, jailbreaks, role-playing exploits, and multi-turn manipulation, with concrete defense controls for each attack type.

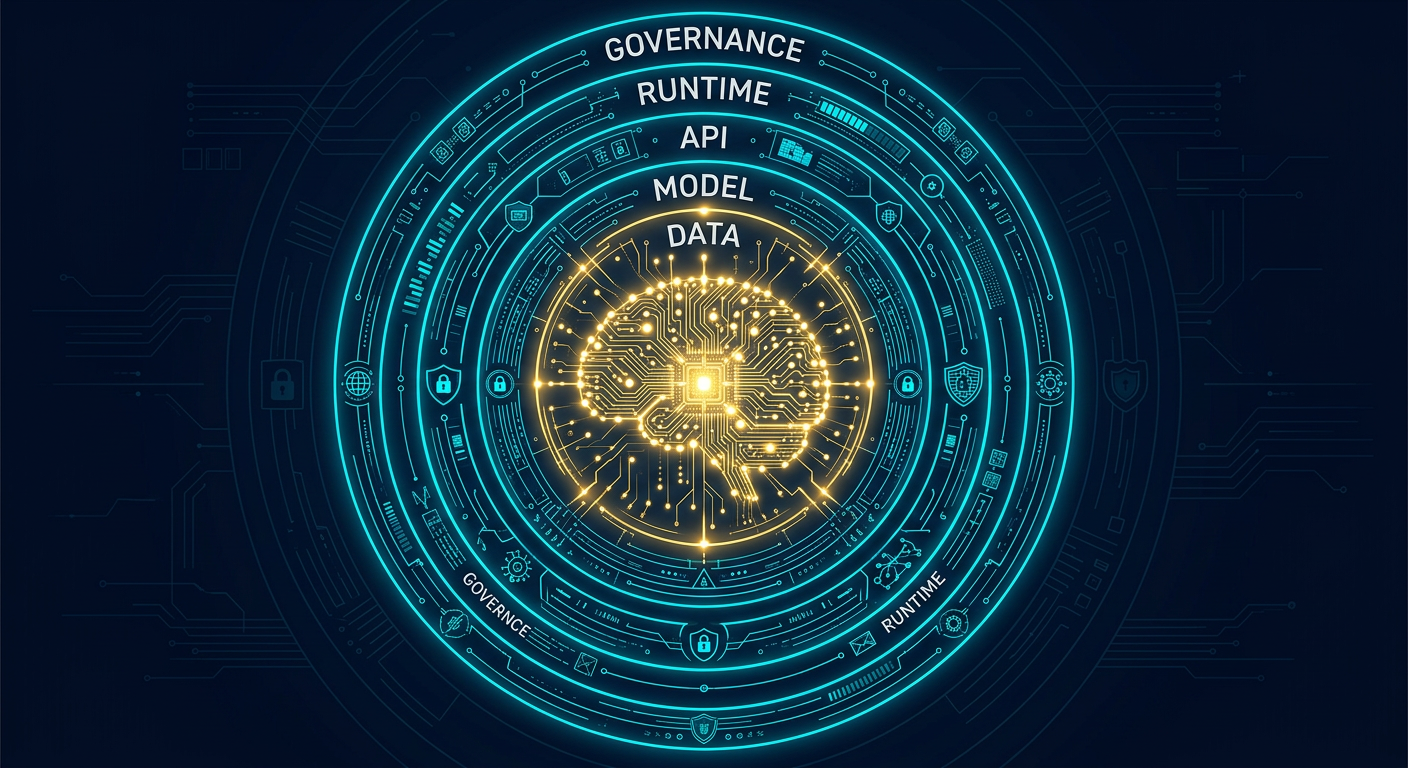

Best Practices To Secure AI Systems: A Comprehensive Guide for Every Team

AI systems introduce attack surfaces that traditional security frameworks were never built to handle. This guide covers every layer of AI security — from model training and API exposure to prompt injection, supply chain risk, and governance — with actionable steps for technical and non-technical teams alike.

OpenAI Abandons Its Erotic ChatGPT Mode — The Latest in a Week of Strategic Retreats

Adult mode is now indefinitely paused, joining Sora and Instant Checkout on the shelf as OpenAI executes a sweeping pivot away from side projects toward enterprise, coding, and a ChatGPT superapp.

OpenAI Launches Safety Bug Bounty Program to Reward Researchers Who Find AI Abuse Risks

OpenAI is opening a public Safety Bug Bounty program targeting AI-specific misuse scenarios — from agentic prompt injection to platform integrity bypasses — that fall outside traditional security vulnerability scopes.