Microsoft Commits $18 Billion to Australia's AI Infrastructure Push

Microsoft plans to spend $18 billion in Australia through 2029, expanding Azure capacity, cybersecurity partnerships, AI safety work, and workforce training. The deal is part of a broader global race to secure compute, policy alignment, and national AI capability.

Anthropic Launches Claude Opus 4.7 With Stronger Coding, Sharper Vision, and New Cyber Guardrails

Anthropic has released Claude Opus 4.7 as a broad upgrade to Opus 4.6. It pairs stronger software engineering and high-resolution vision with a new cyber safety layer, meant to test how more capable models can be deployed without widening dangerous misuse.

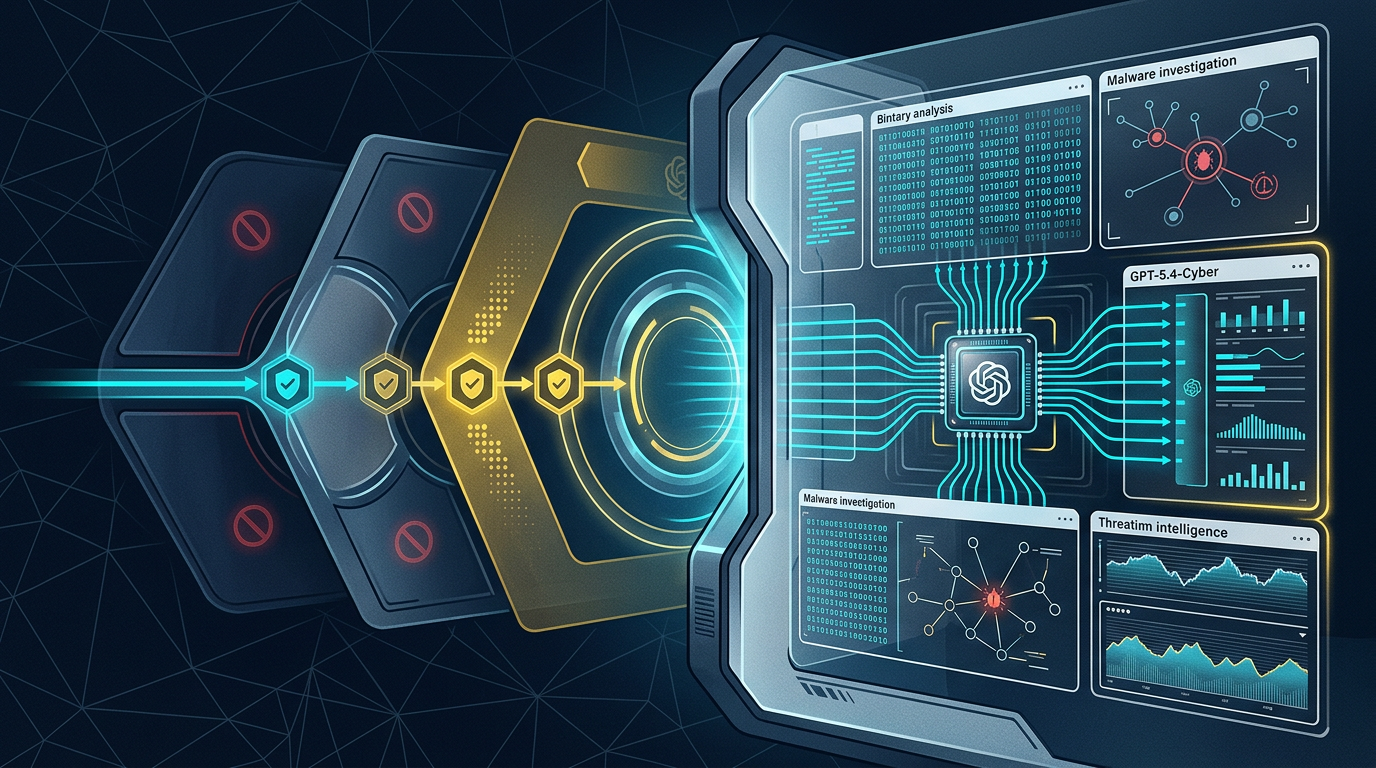

OpenAI Expands Trusted Cyber Access With GPT-5.4-Cyber for Verified Defenders

OpenAI is expanding its Trusted Access for Cyber program and introducing GPT-5.4-Cyber, a more permissive model for vetted security teams working on malware analysis, reverse engineering, and defensive cybersecurity tasks.

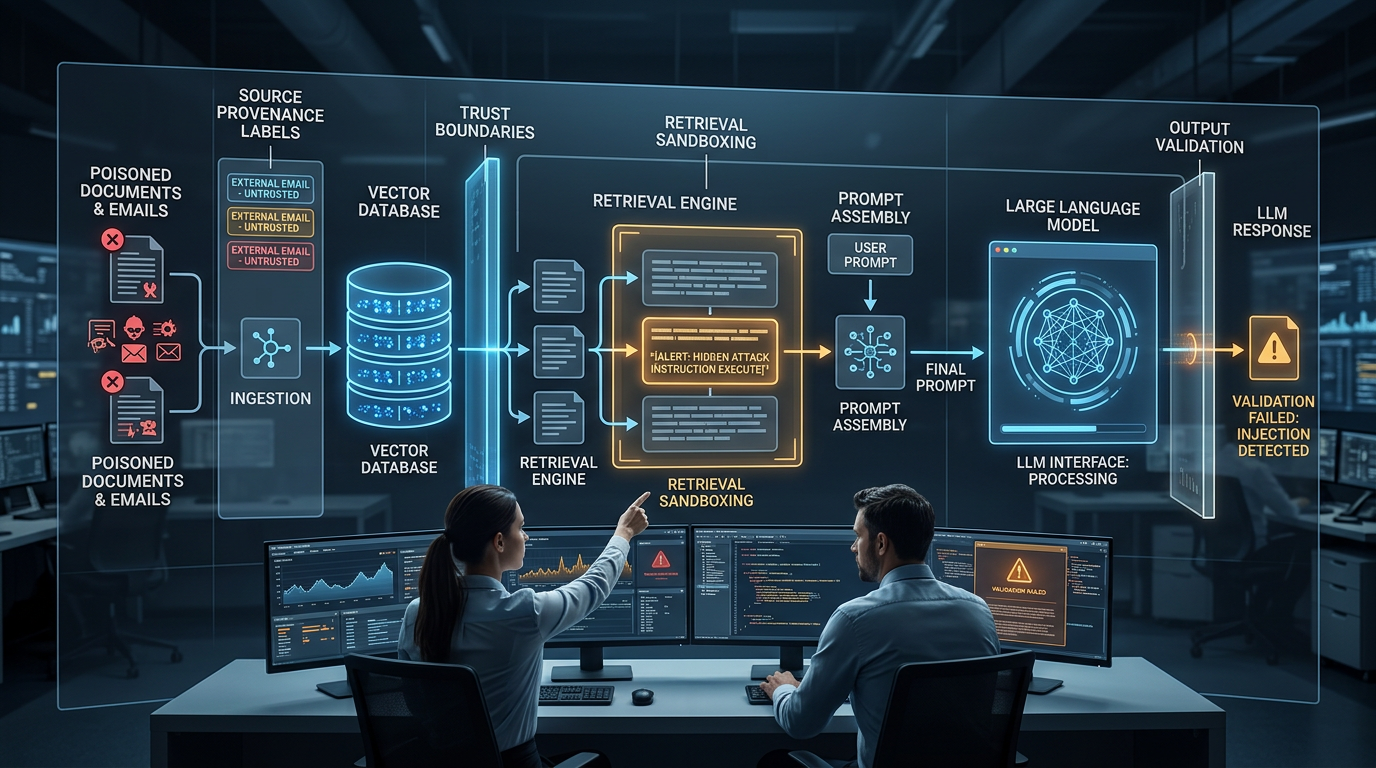

How Indirect Prompt Injection Exploits RAG Pipelines, and 4 Controls That Actually Contain It

When your LLM retrieves documents, emails, or web pages to answer queries, every one of those sources is a potential injection vector. Here is how indirect prompt injection works inside RAG architectures and what technical controls reduce your exposure.

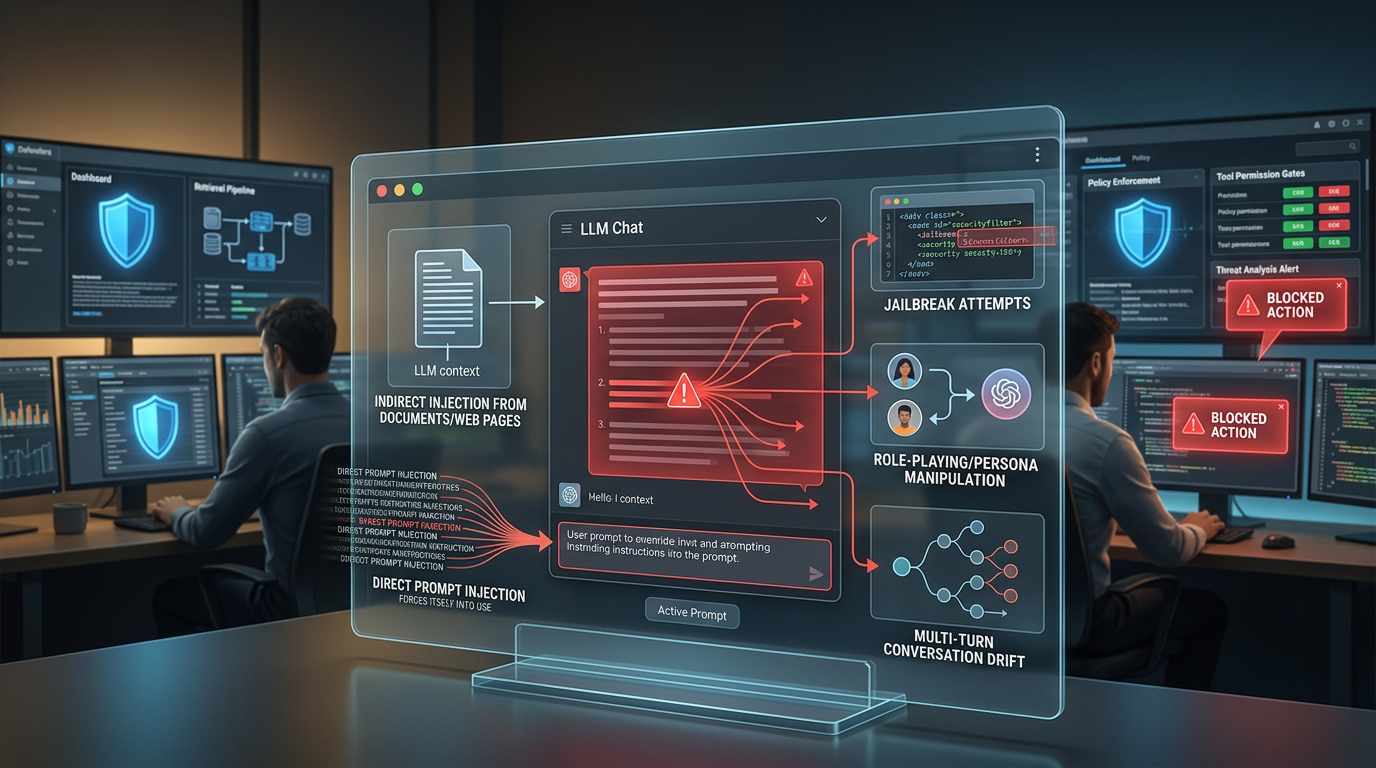

5 Types of Prompt Injection Attacks Targeting Deployed LLMs, and How to Block Each One

Not all prompt injection attacks work the same way. This breakdown covers direct injection, indirect injection, jailbreaks, role-playing exploits, and multi-turn manipulation, with concrete defense controls for each attack type.

Anthropic Unveils Project Glasswing to Put Frontier AI on Cyber Defense Duty

Anthropic has launched Project Glasswing, a new initiative built around Claude Mythos Preview to help secure critical software before advanced AI systems make cyberattacks easier to scale. The company is framing it as a defense-first response to rapidly improving AI vulnerability research.

Microsoft Commits $10 Billion to AI and Cybersecurity in Japan, Pledging to Train a Million Engineers by 2030

Microsoft has announced a $10 billion investment in Japan covering cloud and AI infrastructure, national cybersecurity, and workforce development — the largest in a series of major AI commitments across Asia made within a single week.

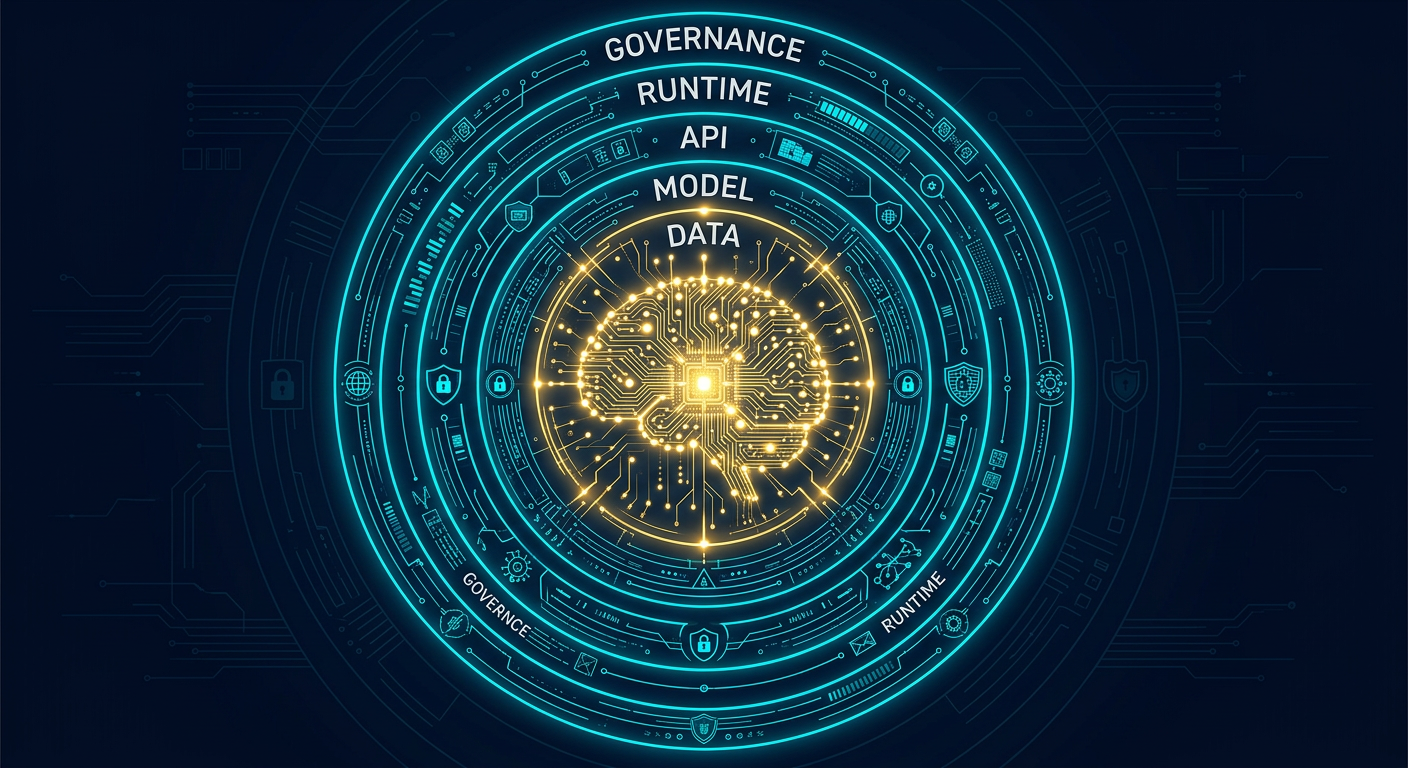

Best Practices To Secure AI Systems: A Comprehensive Guide for Every Team

AI systems introduce attack surfaces that traditional security frameworks were never built to handle. This guide covers every layer of AI security — from model training and API exposure to prompt injection, supply chain risk, and governance — with actionable steps for technical and non-technical teams alike.

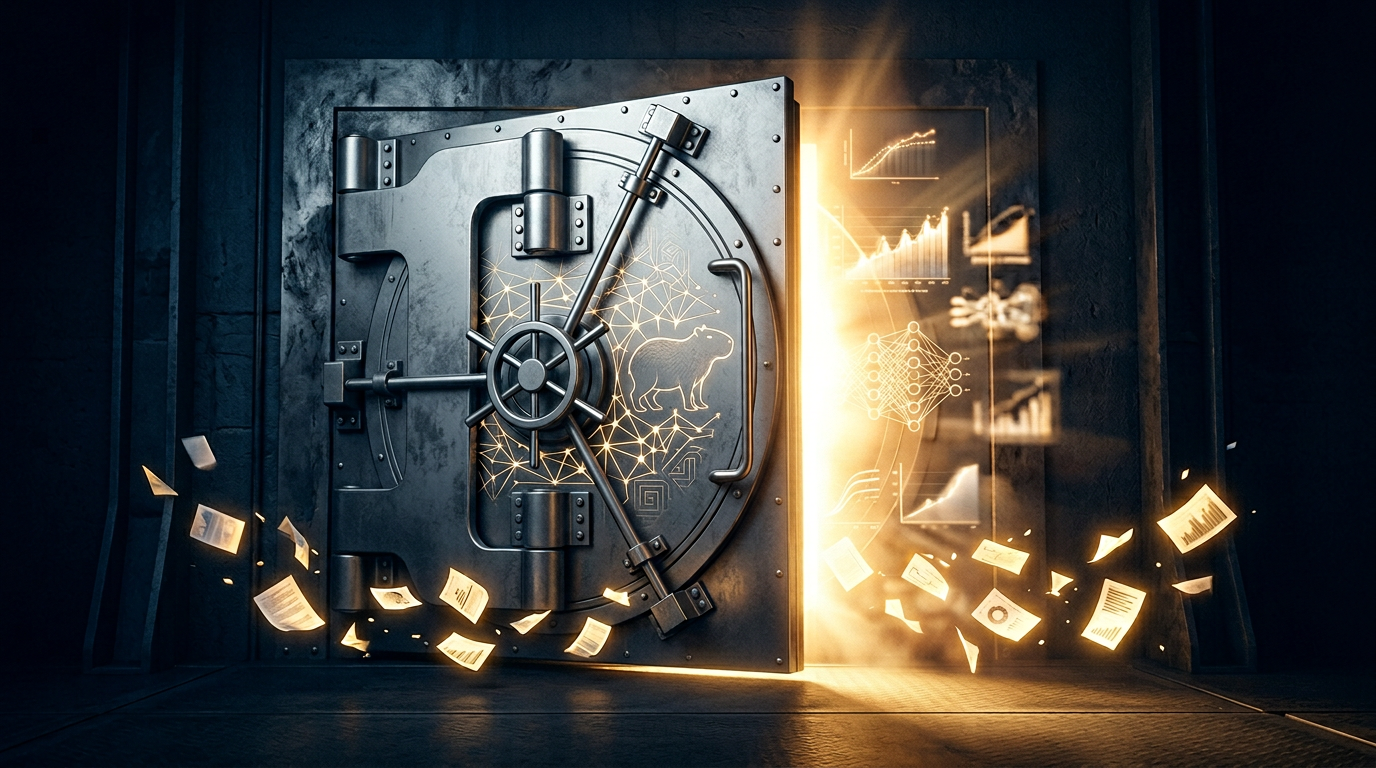

Claude Mythos Leaked: Anthropic's Most Powerful Model Yet Poses 'Unprecedented Cybersecurity Risk'

A CMS misconfiguration exposed nearly 3,000 internal Anthropic assets, including a draft blog post describing Claude Mythos — a new model tier above Opus that the company itself warns is 'far ahead of any other AI model in cyber capabilities.' Anthropic has confirmed the model exists.

Claude Code Leaks Its Own Source Code for the Second Time in a Year via npm Source Maps

A 60MB source-map file included in Claude Code v2.1.88 exposed 1,906 proprietary TypeScript source files on the public npm registry — the same packaging oversight that struck Anthropic in February 2025.

Databricks Acquires Two Startups to Power Its New AI-Driven Security Product

Flush with capital from a $5 billion raise, Databricks is moving into enterprise security with Lakewatch, a new SIEM platform backed by Claude and two quiet acquisitions.

OpenAI Launches Safety Bug Bounty Program to Reward Researchers Who Find AI Abuse Risks

OpenAI is opening a public Safety Bug Bounty program targeting AI-specific misuse scenarios — from agentic prompt injection to platform integrity bypasses — that fall outside traditional security vulnerability scopes.