Anthropic is not presenting Claude Opus 4.7 as a routine model refresh. The company is framing it as a more dependable agentic system for hard software work, with stronger coding performance, much better image understanding, and a new safety posture for cybersecurity tasks that Anthropic clearly sees as a live deployment problem rather than a distant risk.

That combination is what makes this release important. Opus 4.7 is both a capability upgrade and a policy test: a stronger model that Anthropic is willing to ship broadly, but only with tighter controls around one of the most sensitive domains frontier labs now face.

Anthropic Is Selling Reliability More Than Raw Hype

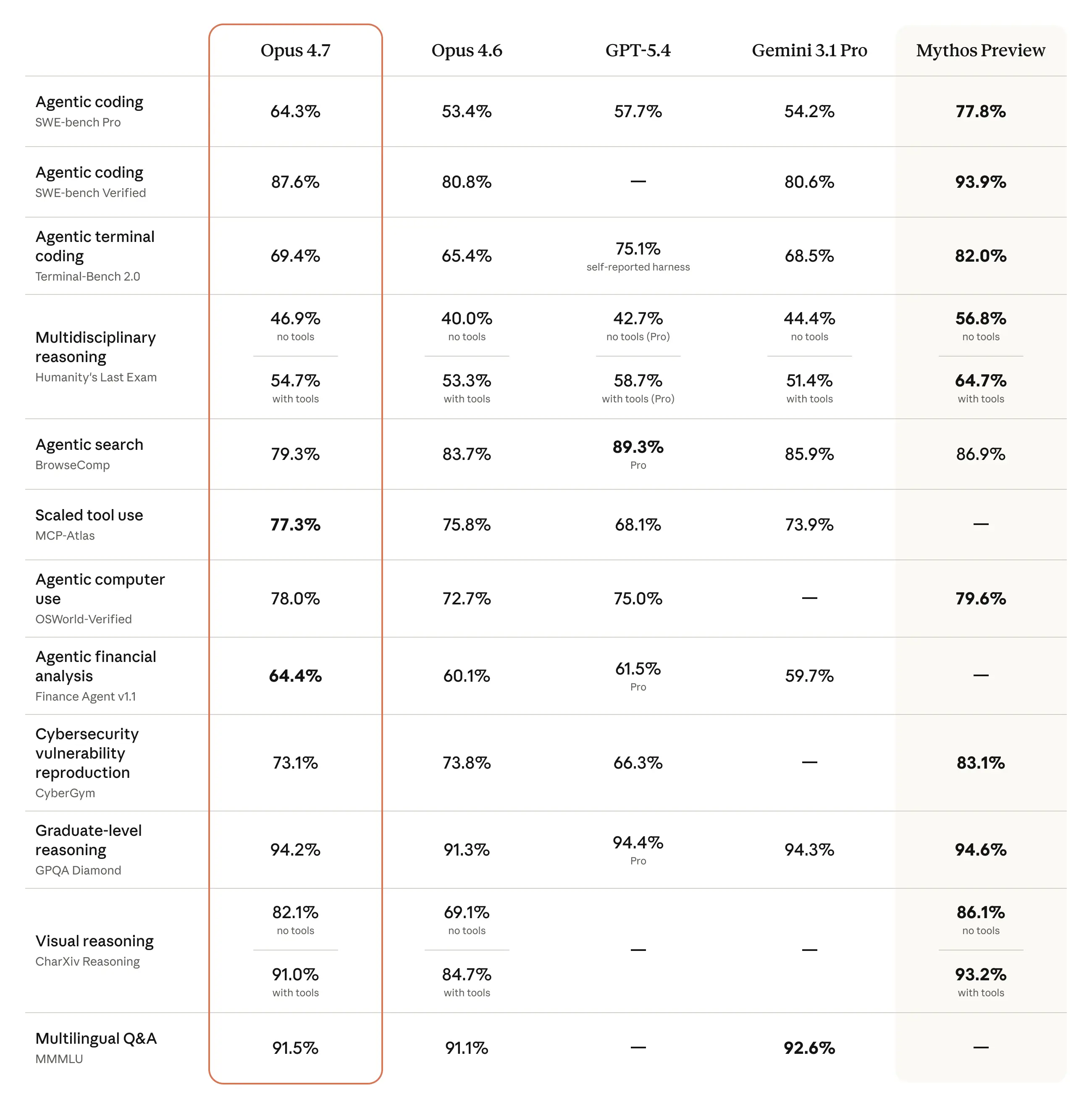

According to Anthropic’s launch post, the central improvement over Opus 4.6 is in advanced software engineering, especially on difficult, long-running tasks that previously needed more human supervision. That is a meaningful claim because the frontier model race is increasingly less about who wins a headline benchmark and more about which system can stay coherent across long task chains, follow instructions precisely, and recover from complexity without drifting off course.

Anthropic says early users found Opus 4.7 more trustworthy for hard coding assignments, with stronger rigor and better self-verification before returning answers. If that holds up in production, it matters well beyond developer tooling. Enterprise buyers care less about whether a model is charming in a demo and more about whether it can operate inside real workflows without creating review debt at every turn.

The company is also emphasizing that Opus 4.7 interprets instructions more literally than earlier versions. That sounds like a minor prompt-engineering detail, but it has practical consequences. Teams that built prompts and evaluation harnesses around the looser behavior of older Claude models may find that the same wording now produces narrower, stricter, or simply different outputs. Anthropic is effectively warning users that better instruction following can break old assumptions just as easily as it improves new tasks.

Vision and Multimodal Work Get a Serious Upgrade

Another major part of the release is vision. Anthropic says Opus 4.7 can now handle images up to 2,576 pixels on the long edge, more than tripling the visual detail budget available to prior Claude models. That matters for far more than generic image analysis. High-resolution perception is especially useful in the kinds of workflows Anthropic increasingly wants Claude to own: reading dense screenshots, extracting information from complicated charts, and working against visual interfaces where tiny details can change the meaning of a task.
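For teams preparing screenshots or charts before sending them to the model, the long-edge figure above translates into a simple scaling rule. The sketch below is only an illustration of that arithmetic: the 2,576-pixel limit comes from Anthropic's stated figure, while the function name `fit_long_edge` and the choice to downscale on the client side are assumptions, not part of any Anthropic SDK.

```python
MAX_LONG_EDGE = 2576  # long-edge limit cited for Opus 4.7


def fit_long_edge(width: int, height: int,
                  max_long_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side fits the limit.

    Hypothetical helper: images already within the limit are returned unchanged,
    and the aspect ratio is preserved up to rounding.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if max(width, height) <= max_long_edge:
        return width, height
    if width >= height:
        # Landscape or square: pin the width to the limit, scale the height.
        return max_long_edge, max(1, round(height * max_long_edge / width))
    # Portrait: pin the height to the limit, scale the width.
    return max(1, round(width * max_long_edge / height)), max_long_edge


if __name__ == "__main__":
    print(fit_long_edge(5152, 2898))
    print(fit_long_edge(1920, 1080))
```

A 1920 by 1080 screenshot passes through untouched, while a 5152 by 2898 capture is halved to fit. Whether to resize before upload or let the platform handle it is a deployment choice this sketch does not settle.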

For agentic systems, better vision is not a cosmetic feature. It expands what the model can reliably perceive in operating environments that are still built for humans. A coding agent that can read cluttered dashboards, inspect design mockups, and parse complex interface states without dropping details is a different kind of enterprise tool than one that mostly lives in plain text.

Source: Anthropic

Anthropic is also tying those capability gains to professional output quality. The company says Opus 4.7 is more tasteful and creative in applied work such as interfaces, documents, and presentations. That is easy marketing language to overuse, but here it fits a broader pattern: frontier labs are now competing on whether models can produce work products that are not just correct enough, but polished enough to survive internal review.

The Cybersecurity Story Connects Directly to Glasswing

The most strategically revealing section of this release is not about coding or vision. It is the cyber safety layer. Anthropic says Opus 4.7 is the first model released under the framework it described when announcing Project Glasswing, its initiative to use more advanced AI systems for defensive cybersecurity while delaying broader rollout of its most cyber-capable frontier models.

That makes Opus 4.7 a kind of intermediate deployment vehicle. Anthropic says the model is less capable in cybersecurity than Claude Mythos Preview and that it experimented during training with reducing those capabilities. At the same time, the company is shipping Opus 4.7 with automated safeguards that detect and block requests indicating prohibited or high-risk cyber use.

This matters because Anthropic is not treating cyber misuse as a simple moderation problem anymore. It is treating it as a staged deployment problem. First comes a model that is useful, but not the strongest in the lab. Then comes automated enforcement in real-world conditions. Then, if those systems work, Anthropic learns enough to think about broader release for models with more dangerous cyber capabilities.

That logic lines up cleanly with the Glasswing thesis. In that earlier initiative, Anthropic argued that advanced models should be put to work securing critical software before similar capabilities become easier for attackers to operationalize. Opus 4.7 extends that same logic into the public product line: stronger models can ship, but only with tighter cyber boundaries and more structured pathways for legitimate use.

For security professionals, Anthropic is also opening a more formal access path. The company says researchers working on legitimate tasks such as red-teaming, vulnerability research, and penetration testing can apply through its Cyber Verification Program. That is an important signal to the market. Anthropic wants to be seen not as blocking cyber work wholesale, but as separating trusted defensive use from broad, lightly governed access.

Availability, Pricing, and the Push Toward Agentic Control

Anthropic says Opus 4.7 is available across Claude products, the API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry, while keeping pricing unchanged from Opus 4.6 at $5 per million input tokens and $25 per million output tokens. That stability matters because it removes one common obstacle to upgrade decisions. Anthropic is asking customers to absorb workflow changes and potentially higher token counts, but not a direct list-price increase.

There are, however, still operational tradeoffs. Anthropic says Opus 4.7 uses an updated tokenizer that can turn the same input into roughly 1.0x to 1.35x as many tokens, depending on content type. It also says the model tends to think more at higher effort levels, especially in longer agentic runs. The first shift raises input token counts and the second raises output token counts, so total usage can climb even as reliability improves.
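A rough way to see what the tokenizer change means for a budget is to apply the quoted multiplier range to an existing workload. The sketch below uses only the figures reported here ($5 and $25 per million input and output tokens, and a 1.0x to 1.35x input multiplier). The function names and the sample workload are hypothetical, and it assumes output token counts stay fixed, which is a simplification given that the model may also think more at higher effort levels.

```python
INPUT_PRICE_PER_MTOK = 5.00    # USD per million input tokens (from the article)
OUTPUT_PRICE_PER_MTOK = 25.00  # USD per million output tokens (from the article)
TOKENIZER_MULTIPLIER_RANGE = (1.0, 1.35)  # reported input-token multiplier range


def estimate_cost(input_tokens: float, output_tokens: float,
                  input_multiplier: float = 1.0) -> float:
    """Estimate list-price cost in USD for one workload.

    input_multiplier scales the input token count to model the new tokenizer.
    Output tokens are taken as given (assumption: unchanged by the tokenizer).
    """
    if input_tokens < 0 or output_tokens < 0 or input_multiplier <= 0:
        raise ValueError("token counts must be >= 0 and multiplier must be > 0")
    scaled_input = input_tokens * input_multiplier
    return (scaled_input * INPUT_PRICE_PER_MTOK
            + output_tokens * OUTPUT_PRICE_PER_MTOK) / 1_000_000


def cost_range(old_input_tokens: float, output_tokens: float) -> tuple[float, float]:
    """Return (low, high) cost estimates across the reported multiplier range."""
    low_mult, high_mult = TOKENIZER_MULTIPLIER_RANGE
    return (estimate_cost(old_input_tokens, output_tokens, low_mult),
            estimate_cost(old_input_tokens, output_tokens, high_mult))


if __name__ == "__main__":
    # Hypothetical workload: 2M input tokens (old tokenizer) and 400k output tokens.
    low, high = cost_range(2_000_000, 400_000)
    print(f"Estimated cost: ${low:.2f} to ${high:.2f}")
```

For that hypothetical workload the estimate runs from about $20.00 to about $23.50, so the same list price can still produce a noticeably larger bill at the top of the multiplier range.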

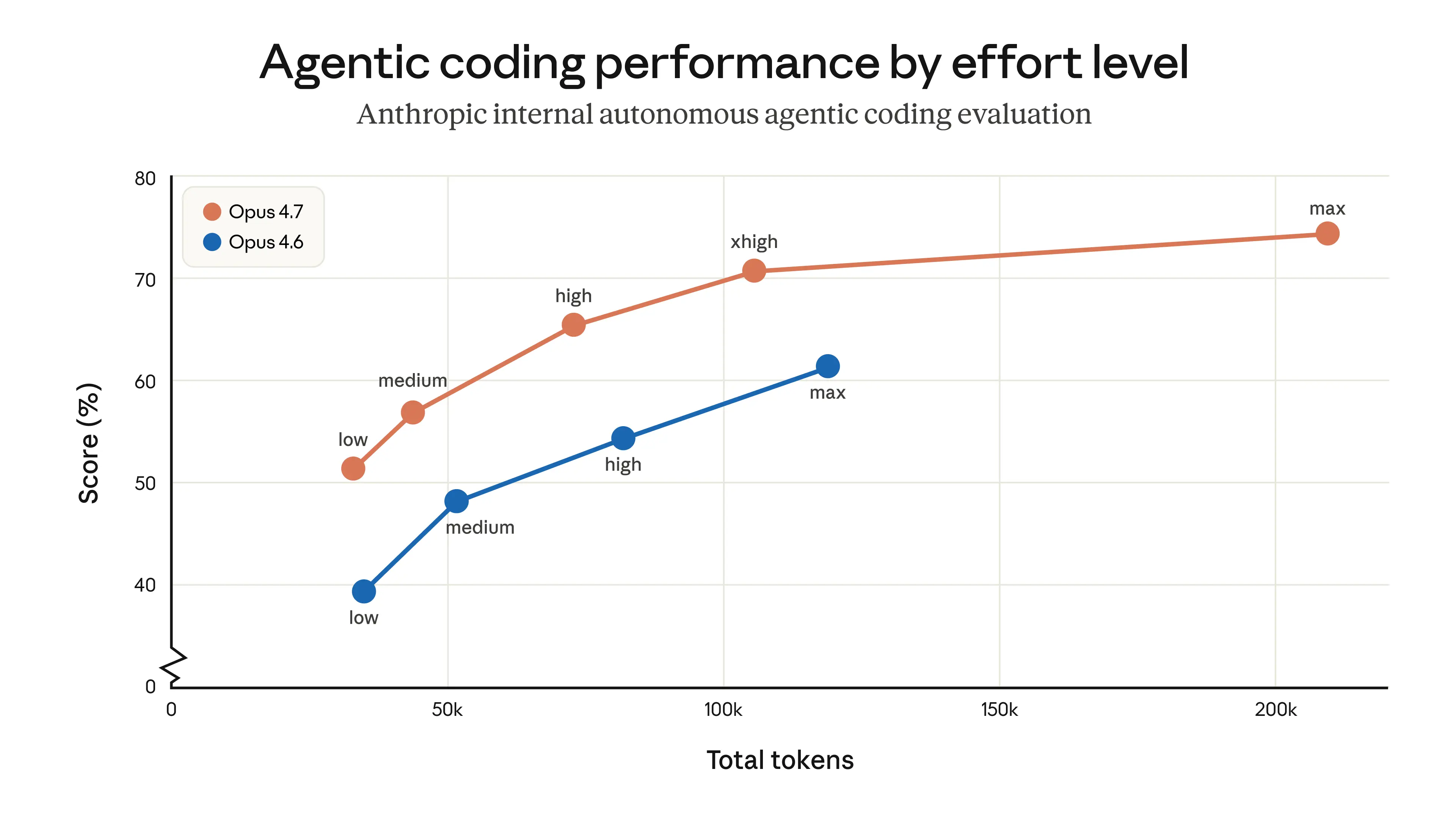

That is why the company is pairing the release with more controls. Opus 4.7 introduces a new xhigh effort tier between high and max, giving developers finer control over the tradeoff between reasoning depth and latency. Anthropic is also launching task budgets in public beta on the platform side, a sign that the company increasingly sees agentic model usage as something to allocate and govern over time rather than simply invoke per request.

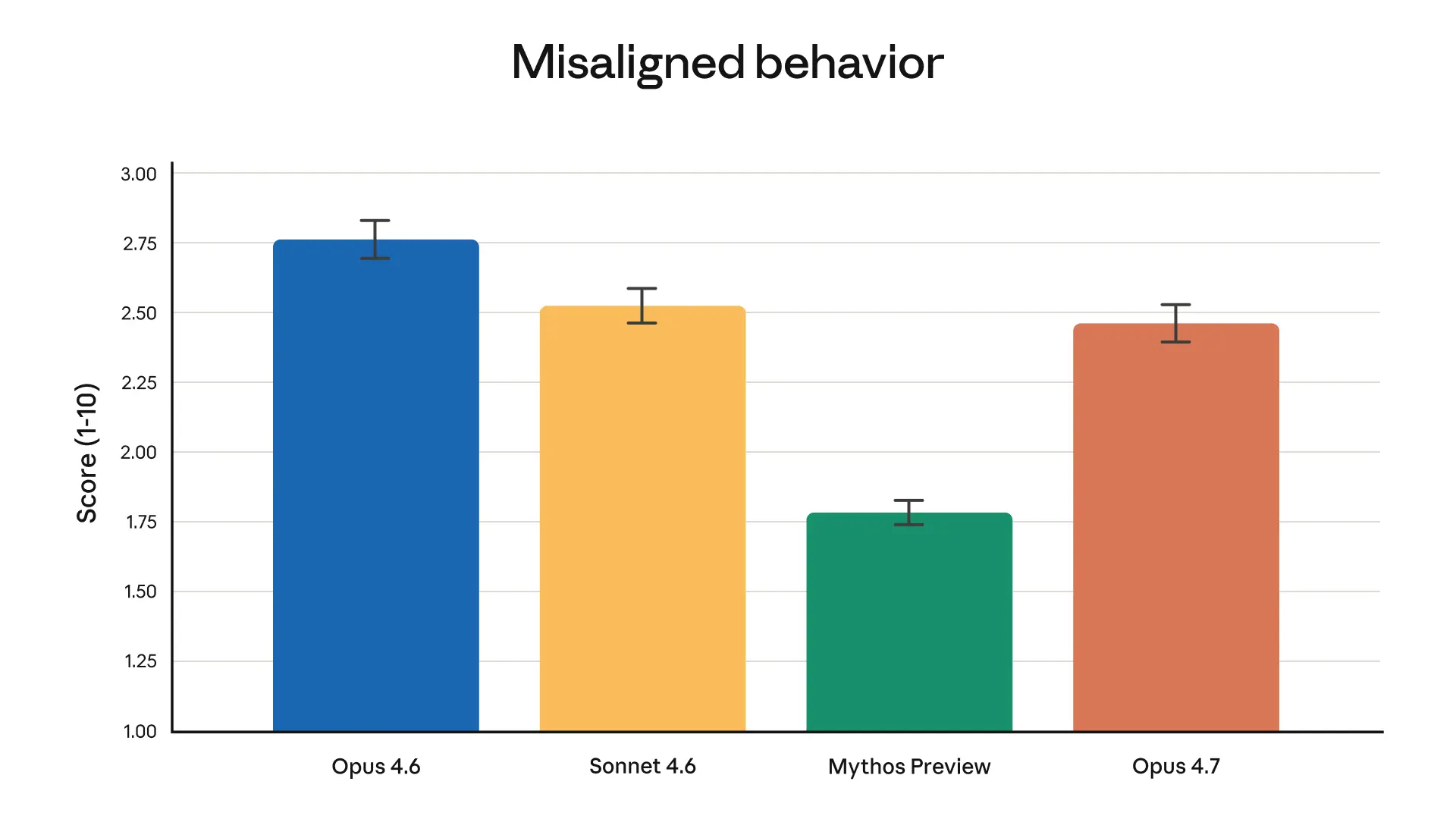

Overall misaligned behavior scores from Anthropic’s automated behavioral audit show Claude Opus 4.7 improving modestly over Opus 4.6 and Sonnet 4.6, while Mythos Preview still records the lowest rate of concerning behavior.

Source: Anthropic

The same pattern appears in the product updates around Claude Code. Anthropic is raising the default effort level to xhigh for all plans, adding task-budget controls on the API, and introducing /ultrareview, a dedicated review mode designed to catch bugs and design issues more like a careful human reviewer would. Taken together, those additions say something clear about where the company thinks value is moving: toward longer-running AI systems that need better supervision knobs, not just smarter base models.

The Bigger Question Is Whether Enterprises Accept the Tradeoff

Opus 4.7 looks like a serious upgrade, but it is also a compact summary of the frontier AI bargain now being offered to enterprises. In exchange for stronger coding, better multimodal perception, and more autonomous work, customers need to accept models that may consume tokens differently, interpret instructions more literally, and operate behind increasingly dynamic safety and verification systems.

Anthropic says Claude Opus 4.7 delivers stronger scores on an internal agentic coding evaluation across effort settings, though the company notes those results come from autonomous single-prompt runs and may not match interactive coding usage exactly.

Source: Anthropic

That does not make the release weaker. If anything, it makes it more credible. Anthropic is not pretending Opus 4.7 is free performance. It is positioning the model as a more capable but more governable system, one that fits the reality that agentic AI is now judged on supervision, safety boundaries, and operational control as much as raw intelligence.

The near-term takeaway is straightforward: Claude Opus 4.7 is a notable model upgrade for enterprises that want better coding and richer multimodal capability today. The longer-term takeaway is even more important. Anthropic is using this launch to rehearse how future, more sensitive models may reach the market at all.