Why this argument now

The 'AI will take your job' narrative has become ambient noise in the 2025-2026 labor market. What has received less attention is who amplifies it, how it functions as a management instrument, and what the actual macroeconomic evidence shows. The Yale Budget Lab found no clear relationship between AI exposure and unemployment through early 2026. Deutsche Bank analysts warned that AI redundancy washing 'will be a significant feature of 2026.' EPI economists have argued for years that the real threat to workers is power imbalance, not technology. This essay assembles the case that the fear, while grounded in something real, is being amplified in ways that serve specific interests — and that workers deserve a more precise analysis.

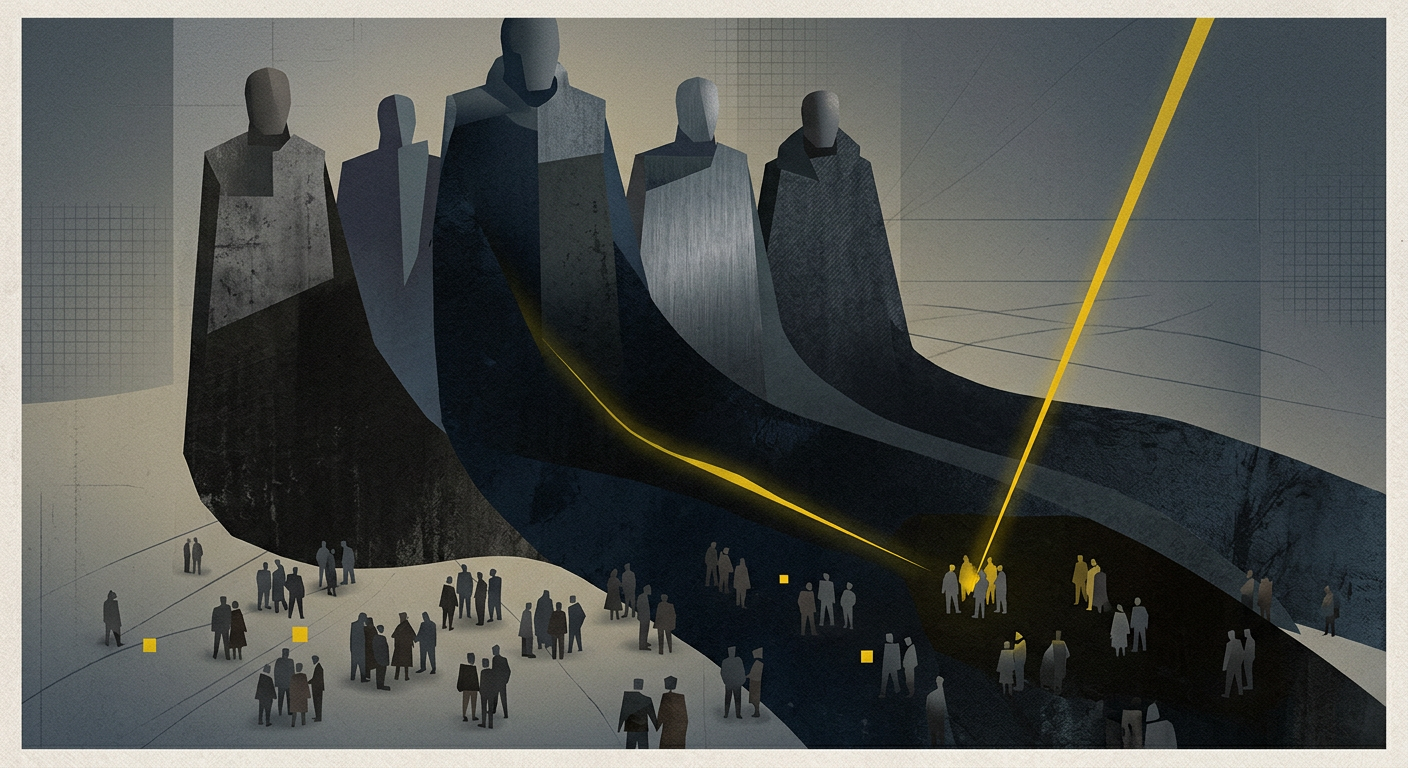

The message has been delivered so many times and from so many directions that it has acquired the texture of fact: AI is coming for your job. The press releases say it. The investor calls say it. The conference keynotes say it. CEOs say it when announcing layoffs and again when announcing hiring freezes. Analysts say it when projecting labor cost savings. The fear is now so ambient, so continuously refreshed by new headlines, that questioning it feels naive, like doubting gravity.

But the feeling that something is inevitable and the evidence that it is already happening are different things. And the gap between them — between the fear that is real and the displacement that is not yet showing up in the data at the scale the narrative implies — is where this essay wants to spend time. Not to dismiss the long-term question of what AI does to work, which is genuinely open. But to ask a different question: who benefits from keeping that fear at maximum volume right now, before the evidence has resolved?

The answer is not a conspiracy. It is a structural interest. And it has a history.

What the Data Actually Shows

The most carefully maintained public record of AI’s actual impact on the U.S. labor market belongs to the Yale Budget Lab, which has been tracking the relationship between AI exposure and employment outcomes since ChatGPT’s launch in late 2022. Yale Budget Lab findings through early 2026 show no clear relationship between occupational AI exposure and unemployment duration, no acceleration in the rate of occupational mix change since ChatGPT’s release, and no meaningful upward trend in AI-exposed workers appearing among the unemployed. In the executive director’s own words: “No matter which way you look at the data, at this exact moment, it just doesn’t seem like there’s major macroeconomic effects here.”

New evidence from the St. Louis Federal Reserve points in the same direction. AEI analysis of surveys across the US and Europe in 2025 and 2026 found no clear evidence that AI adoption was associated with job gains or losses at the industry level — and noted that higher-adoption sectors are also seeing faster productivity growth on both sides of the Atlantic. A separate Federal Reserve analysis combining job-postings data with the Census Bureau’s Business Trends and Outlook Survey found no evidence that AI adoption is reducing hiring.

This does not mean displacement is impossible or that it will not accelerate. It means the scale of current fear is significantly outrunning the scale of current evidence. A Reuters/Ipsos poll from August 2025 found 71% of Americans feared AI could put people out of work permanently. Mercer’s Global Talent Trends 2026 survey of 12,000 workers worldwide found that employee concerns about losing their job to AI jumped from 28% in 2024 to 40% in 2026: a rise of 12 percentage points, or a 43% relative increase, in two years. That is a fear trajectory running well ahead of any corresponding displacement trajectory in the actual employment data. The question worth asking is why.
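The Mercer figures can be read two ways, and the difference matters when comparing headlines. As a minimal sketch, the snippet below computes both the percentage-point change and the relative change from the two shares quoted above. The function and variable names are illustrative, not taken from Mercer's report.

```python
def point_change(old_pct: float, new_pct: float) -> float:
    """Absolute change between two shares, in percentage points."""
    return new_pct - old_pct


def relative_change(old_pct: float, new_pct: float) -> float:
    """Change expressed as a percentage of the starting share."""
    return (new_pct - old_pct) / old_pct * 100.0


# Shares of workers concerned about losing their job to AI, as quoted in the text.
concern_2024 = 28.0
concern_2026 = 40.0

points = point_change(concern_2024, concern_2026)
relative = relative_change(concern_2024, concern_2026)

print(f"Percentage-point change: {points:.0f} points")
print(f"Relative change: {relative:.1f}%")
```

The relative figure comes out at about 42.9%, which rounds to the 43% cited. The percentage-point figure, 12, describes the same movement on a different scale.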

The Layoff Excuse Problem

To understand the gap between fear and evidence, it helps to look at how AI is actually being cited in corporate layoff announcements — because that is one of the primary channels through which the narrative reaches workers.

Challenger, Gray & Christmas data shows that employers announced more than 1.2 million job cuts in 2025 — the highest total since 2020 — and AI was cited in approximately 55,000 of them, or 4.5%. That number is not zero, and it represents real people with real job losses. But it is also notably smaller than the impression conveyed by the cumulative weight of headlines citing AI as the driver of workforce restructuring. The pattern of how those 55,000 are publicly described has amplified the signal far beyond its share of actual cuts.
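The share quoted above is easy to check from the rounded figures in the text. This is a rough sketch only: both inputs are approximations ("more than 1.2 million" and "approximately 55,000"), so the result is indicative, and the variable names are illustrative.

```python
# Rounded figures as quoted in the text; the true totals are not given here.
total_cuts_lower_bound = 1_200_000  # "more than 1.2 million" announced cuts
ai_cited_cuts = 55_000              # "approximately 55,000" citing AI

# With the lower-bound total, this is an upper estimate of the AI-cited share.
share_pct = ai_cited_cuts / total_cuts_lower_bound * 100.0

# Total number of cuts at which 55,000 would be exactly 4.5%.
implied_total = ai_cited_cuts / 0.045

print(f"AI-cited share using rounded inputs: {share_pct:.1f}%")
print(f"Total implied by a 4.5% share: {implied_total:,.0f}")
```

With the rounded inputs the share is a little under 4.6%. Because the actual total is somewhat above 1.2 million, a figure of roughly 4.5% is consistent with the numbers as stated.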

Deutsche Bank analysts warned explicitly that companies attributing job cuts to AI should be taken “with a grain of salt,” and that “AI redundancy washing will be a significant feature of 2026.” The phenomenon has a now-standard analysis: companies that overhired during the pandemic, that face margin pressure from rising rates, or that need to restructure for entirely conventional business reasons, find that invoking AI as the stated rationale achieves two things simultaneously. It frames the cut as forward-looking and strategically necessary rather than as a management failure or a financial squeeze. And it signals to investors and analysts that the company is engaged with the technology they care about most. Marc Andreessen said it plainly on a podcast in early April 2026: AI is “the silver-bullet excuse” for layoffs that are really about pandemic overstaffing.

Even Sam Altman, whose company is the primary engine of the underlying technology, acknowledged the distortion. His assessment: “There’s some AI washing where people are blaming AI for layoffs that they would otherwise do.” The CEO of OpenAI saying this publicly is not a minor data point. It is the source of the underlying technology acknowledging that its name is being borrowed for a purpose it does not fully serve.

Scott Dylan of NexaTech Ventures describes the downstream consequence clearly: “If you’ve been told your job was eliminated because of AI, and you can see the company doesn’t have the AI to do what you were doing, that breeds a level of distrust that’s incredibly difficult to recover from.” That distrust is not incidental. It is the psychological residue of a narrative being used to make organizational decisions feel more legitimate than they are.

Productivity Gains Without Pay

The most structurally significant dimension of the AI-and-labor story is not the one that generates the most headlines. It is not mass unemployment — which the data does not yet support. It is the question of who captures the productivity gains that AI does generate. And on that question, the data is considerably less reassuring.

PIMCO’s analysis of U.S. labor data through Q3 2025 found that labor’s share of income fell to a record low in a dataset stretching back nearly eight decades — even as productivity continued to grow. The mechanism is not mysterious: when capital can substitute for labor through technology, and when workers lack the bargaining power to claim a share of the resulting gains, productivity growth and wage growth decouple. The pie gets larger. The workers’ slice does not.

EPI economists Josh Bivens and Ben Zipperer have argued in peer-reviewed work that the framing of AI as an independent threat to workers (a technological force that arrives from outside and disrupts labor regardless of policy context) conveniently diverts attention from the real driver of wage stagnation: the accumulated result of policy decisions that have weakened workers’ bargaining position over decades. In their account, declining union membership, minimum wage erosion, and sustained high unemployment are the causes of rising inequality. AI is a new tool that can be deployed in the context of that existing imbalance, either to extend it further or to challenge it, depending entirely on who has the power to direct its use.

The practical implication is that a worker who accepts reduced pay, a heavier workload, or a weakened bargaining position because they believe the alternative is replacement by AI, is responding to an incentive structure that management has every reason to maintain. Fear of displacement is not a neutral psychological state. It is a management condition — one that increases compliance, reduces bargaining demands, and justifies asking more from fewer people at the same or lower cost.

How Anxiety Functions as a Management Tool

ADP’s Today at Work 2026 report, surveying workers across the global economy in late 2025, found that only 18% of frontline workers felt their jobs were secure. Frontline managers were only marginally better at 21%. That is not a workforce preparing to advocate for itself. It is a workforce managing anxiety.

The same report found that workers who feel their employers are investing in their skills were 5.3 times as likely to feel their jobs were secure. That finding is instructive because it identifies the specific lever: information and investment in workers’ futures reduces fear. The absence of that investment — which is the more common condition — leaves the fear unchallenged and ambient. Gallup data shows that while 44% of employees report AI is already being used in their workplace, only 22% say leadership has explained how it will be applied. The information vacuum is not accidental. Ambiguity about AI’s role, left unfilled by leadership, defaults to worst-case interpretation — which is exactly the interpretation that reduces workers’ willingness to push back.

Resume Now’s 2025 AI Disruption Report, surveying more than 1,000 U.S. workers, found that 89% expressed concern about AI’s impact on their job security, and 54% said their employer was only “somewhat transparent” about AI adoption plans. The combination of high anxiety and low information is not a coincidence produced by managerial negligence. For a meaningful portion of organizations, it is a preferred state. Workers who are anxious about replacement are workers who are not pushing for wage increases, not organizing, not filing grievances, and not comparing notes about what the productivity gains they are generating are actually worth.

The History This Echoes

The dynamic is not new. Automation fear has been used as a management instrument across every major technological transition in industrial history. The specific sequence — new technology arrives, productivity projections are maximized in public communication, workers are told the alternative to accepting current conditions is elimination, gains accrue to capital while labor is asked to be grateful for continued employment — is recognizable from the mechanization of textile manufacturing, the introduction of assembly-line automation, the computerization of clerical work, and the offshoring wave of the early 2000s, each of which generated its own version of the AI-will-take-your-job narrative appropriate to its era.

What each of those transitions actually showed, in retrospect, is that technology alone did not determine outcomes for workers. Institutional context did. EPI’s analysis of historical productivity data finds that when workers have genuine bargaining power — through union density, tight labor markets, or robust labor law — productivity gains from new technology tend to translate into wage growth. When they do not, gains accrue to capital regardless of whether the underlying technology is a steam loom or a large language model. The technology is not the variable. The power balance is.

This does not mean AI’s long-term effects on employment are benign or that worker anxiety is irrational. The WEF’s Future of Jobs Report 2025 projected 92 million roles displaced and 170 million created by 2030, a net gain of 78 million that conceals substantial short-term disruption concentrated in specific occupations and demographics. The 41% of employers globally who plan to reduce their headcount in areas where AI can automate tasks within five years represent a real near-term pressure that is not adequately captured by aggregate net job creation figures. The concern is legitimate and the disruption is coming.

What the Narrative Obscures

The problem with the “AI will take your job” framing — in its most amplified, unqualified form — is not that it is entirely false. It is that it substitutes a general technological inevitability for the specific set of decisions that are actually determining outcomes: decisions about whether AI productivity gains are shared with workers or retained as margin, decisions about whether workers have input into how AI is deployed in their roles, decisions about whether the AI-driven restructuring happening inside organizations is genuinely driven by new capability or by older cost pressures wearing new branding.

The Center for American Progress notes that workers who have collective bargaining power — the Writers Guild’s AI provisions being the most-cited example — have been able to negotiate real constraints on how AI is deployed in their workplaces. The California warehouse workers who pushed through quotas legislation in response to AI-generated productivity targets represent a different model of response to the same technological pressure. The outcomes in those cases were not determined by the technology. They were determined by whether workers had organized power to shape how the technology was used.

The question workers should be asking in 2026 is not “will AI take my job?” It is a narrower, more actionable set of questions: Is the productivity AI generates in my workplace showing up in wages? Who decided how AI would be implemented, and what input did workers have in that decision? When my employer cites AI as the rationale for a workforce decision, is that accurate, or is it the convenient branding that Deutsche Bank analysts warned about? The first question produces anxiety. The second set produces leverage.

Fear, kept at the right temperature, serves a purpose that has nothing to do with preparing workers for the future. It produces a workforce too busy managing its own existential dread to notice what is being decided above it. We have argued that the AI hype cycle eventually corrects itself at the technology layer. It is less clear that the fear cycle corrects itself in labor markets without active effort. The correction there requires workers who understand, precisely, what the fear is for.
