Why this argument now

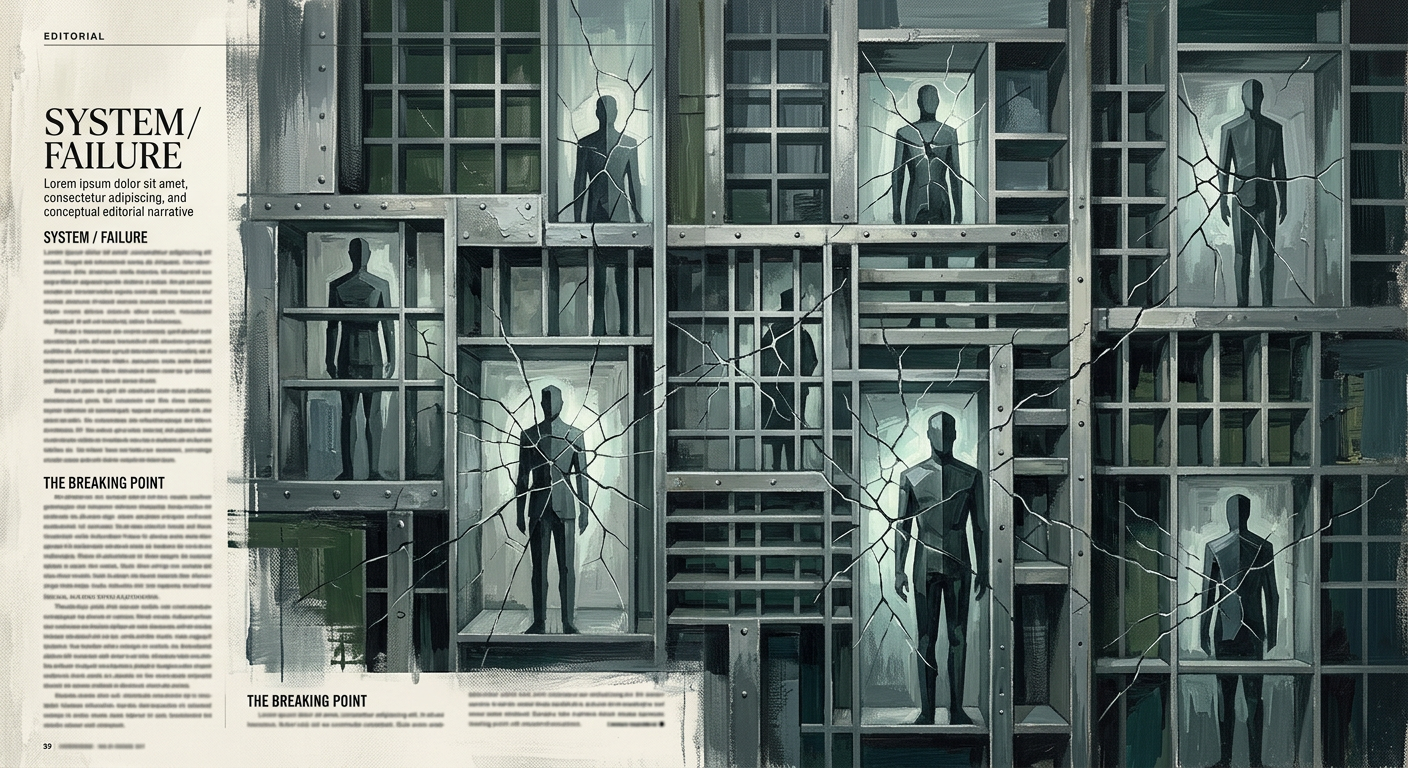

Enterprise AI adoption narratives consistently blame culture, fear, and change resistance. But the data from Deloitte, McKinsey, Gartner, IBM, and Cloudera/HBR in 2025 and 2026 tells a more specific story: the blockers are structural. They are data fragmentation, legacy infrastructure, the pilot-to-production gap, and governance frameworks that arrived before anyone had a deployment playbook. This essay names them clearly.

The standard story about why enterprises are slow to adopt AI goes like this: people are afraid of change, middle management is protecting turf, legacy cultures resist disruption, and the real problem is human psychology rather than technology. This narrative is satisfying because it is unfalsifiable and because it places the solution (cultural transformation) conveniently outside the reach of any single quarterly plan. It is also, as a primary explanation, wrong.

The data accumulated across 2025 and 2026 from Deloitte, McKinsey, IBM, Gartner, and Cloudera describes a different picture. Enterprises are not slow to adopt AI because their people are scared. They are slow because their foundational infrastructure was never built for what AI actually requires. That infrastructure includes data architecture, legacy system integration, governance frameworks, and the organizational wiring that connects pilots to production. The blocker is structural, not psychological. And that distinction matters enormously for what you do about it.

The Culture Blame Loop

The cultural explanation for slow enterprise AI adoption is not invented. Change resistance is real, workforce anxiety about automation is documented, and leadership ambivalence has measurably delayed deployment in specific organizations. Writer’s 2026 enterprise AI survey reports a figure that appears, at first reading, to confirm the culture story. But read the same survey carefully and a different pattern emerges. The friction is happening at companies that are actively investing: 59% of the organizations reporting internal friction are spending over a million dollars annually on AI technology. The problem is not reluctance. It is that the organizational infrastructure required to absorb AI at scale does not yet exist in most enterprises, and the friction appears when deployment pressure collides with that structural absence.

Writer’s follow-up finding was that 79% of organizations still face AI adoption challenges, a double-digit increase from 2025, despite near-universal executive belief in AI’s strategic importance. That is the tell. When belief is high and investment is committed but adoption is still failing at scale, the problem is not belief or commitment. Something in the execution environment is broken, and broken execution environments are infrastructure problems, not culture problems.

The culture explanation is also, practically speaking, a paralysis narrative. If the blocker is human psychology, the solution is a transformation program that takes years and requires leadership commitment that most organizations cannot maintain across multiple budget cycles. If the blocker is infrastructure, the solution is an engineering and governance problem that can be scoped, resourced, and resolved. That difference in practical consequence is reason enough to get the diagnosis right.

The Data Foundation Nobody Wants to Own

The most specific and least discussed structural blocker is data readiness. It is not data science talent, not AI model selection, and not model quality. It is whether the underlying enterprise data, which has accumulated over decades across incompatible systems, is in a condition that allows AI to do anything useful with it.

The Cloudera and HBR report found that only 7% of enterprises describe their data as completely ready for AI adoption, while 27% say their data is not very ready or not ready at all. This is happening at the same moment enterprises are accelerating AI initiatives, not before them and not in spite of them. The gap between AI ambition and data readiness is not narrowing. It is widening.

Gartner’s February 2025 research. That number is not a readiness score from organizations that have not yet started thinking about AI. It is a measurement of organizations that are actively trying to deploy AI and discovering, mid-project, that their data foundations cannot support what they are attempting to build. Gartner’s estimate.

The underlying cause is not neglect. It is accumulation. Enterprise data environments are the archaeological record of twenty-plus years of software acquisitions, system migrations, departmental tool choices, and organizational restructurings. Sales lives in one CRM. Finance lives in an ERP that was customized beyond recognition during an implementation that ended in 2011. Customer support tickets are in a platform that was acquired and re-platformed twice. None of these systems were designed to share a consistent, trusted view of information with an AI that needs to reason across all of them simultaneously. IBM’s AI-ready data analysis. IBM’s CEO study. That is not a culture statistic. It is an infrastructure outcome.

Legacy Infrastructure Is Not a Metaphor

When enterprise leaders talk about legacy infrastructure as a barrier to AI adoption, the instinct in most AI coverage is to treat it as a euphemism, a polite way of describing organizational inertia. It is worth being more precise, because the mechanism is specific and the economics are harder than the conversation usually acknowledges.

Deloitte’s 2026 State of AI report. That is not a soft cultural barrier. It is a technical constraint with a specific monetary cost attached. A Metosys analysis. The same report notes that over 75% of ERP-related AI projects stall specifically at integration boundaries. They do not stall at the model layer or at the prompt engineering layer. They stall at the point where the AI needs to reach into systems that were not built to be reached into.

The economics here deserve more attention than they typically receive. A company that wants to deploy an AI agent to automate procurement workflows cannot simply install a new tool. It needs to connect that tool to an ERP system whose vendor may no longer exist, whose data schema was customized by a consulting firm that no longer has the documentation, and whose integration interfaces were designed for point-to-point connections that predate the API economy. The cost of preparing that environment (not the cost of the AI model, but the cost of making the enterprise’s existing systems AI-compatible) is often larger than the AI deployment itself. That cost does not appear in most AI vendor conversations, which is one reason the gap between what gets sold and what gets deployed remains so persistently wide.

The Pilot Trap

There is a specific failure mode in enterprise AI that is distinct from both data readiness and legacy integration, and that requires its own diagnosis: the pilot-to-production gap. It is the most demoralizing structural problem in enterprise AI because it creates the experience of near-success. The pilot worked, the demo was impressive, and the use case is clear. Then comes a failure that is genuinely hard to locate and explain.

McKinsey’s 2025 State of AI research. The parallel finding from MIT is independently consistent: only approximately 5% of AI pilot programs generate measurable P&L impact. McKinsey’s broader finding. The usage numbers are high; the scaling numbers are not.

The reason pilots succeed and production deployments fail is architectural, not motivational. S&P Global Market Intelligence data. The four failure modes that a pilot does not surface � data inaccessibility at scale, integration rigidity under production load, API latency across real enterprise systems, and governance gaps that only manifest when real decisions are being made � are exactly the failure modes that production exposes. A pilot runs against a clean, curated dataset in a controlled environment with a dedicated engineering team and a sympathetic stakeholder. Production runs against the actual enterprise data environment, with real users, real edge cases, and real compliance requirements. Those are different tests.
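One way to make the four failure modes above concrete is to treat them as an explicit promotion gate. The Python sketch below is a minimal, hypothetical illustration and is not a method from any of the cited studies. The names `FAILURE_MODES`, `ProductionCheck`, and `promotion_blockers` are assumptions invented for this example. It encodes the point that a pilot which passed only under curated conditions has not yet cleared any of the four checks.

```python
from dataclasses import dataclass

# Hypothetical labels for the four failure modes a pilot does not surface.
FAILURE_MODES = (
    "data_access_at_scale",
    "integration_under_load",
    "latency_on_real_systems",
    "governance_on_real_decisions",
)


@dataclass
class ProductionCheck:
    """Outcome of one check (hypothetical schema)."""
    mode: str
    passed: bool
    tested_in_production_like_env: bool  # False if run only on curated pilot data


def promotion_blockers(checks: list[ProductionCheck]) -> list[str]:
    """Return the failure modes that still block promotion from pilot to production.

    A mode blocks promotion if it was never checked, if it failed, or if it
    was only checked under pilot conditions.
    """
    by_mode = {c.mode: c for c in checks}
    blockers = []
    for mode in FAILURE_MODES:
        check = by_mode.get(mode)
        if check is None or not check.passed or not check.tested_in_production_like_env:
            blockers.append(mode)
    return blockers


if __name__ == "__main__":
    # A typical pilot: every check "passed", but only against curated data.
    pilot = [ProductionCheck(m, passed=True, tested_in_production_like_env=False)
             for m in FAILURE_MODES]
    print(promotion_blockers(pilot))
```

The design choice worth noting is that a check passed in the pilot environment counts for nothing on its own. Only results from a production-like environment clear a failure mode.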

StackAI’s 2026 enterprise AI analysis makes the shift explicit. The limiting factor has moved from “can we access a model?” to “can we operationalize this safely across the organization?”, and that second question is an infrastructure and governance question, not a model quality question. Enterprises that are pulling ahead are building standardized deployment stacks (model gateways, retrieval patterns, evaluation pipelines, reusable tool connectors) rather than simply selecting better foundation models. The differentiator is operational architecture, not AI capability.

Governance Arrived Before Anyone Had a Playbook

The regulatory dimension of enterprise AI slowdown is also structural, and also routinely misread as cultural. The complaint that “legal keeps blocking AI initiatives” is real and widespread, but it describes a symptom, not a cause. The cause is that mandatory governance obligations arrived faster than enterprise deployment playbooks could be developed, and organizations are now doing the remediation work that should have preceded deployment in parallel with deployment itself.

The EU AI Act timeline matters here: it entered into force in August 2024, becomes fully applicable in August 2026, and carries fines reaching €35 million or 7% of global annual turnover for non-compliance. General-purpose AI obligations have been live since August 2025. The governance market forecast shows the consequence: enterprise AI governance and compliance is projected to grow from $2.2 billion in 2025 to $11 billion by 2036. That market exists because organizations were deploying AI without governance frameworks, regulators responded with enforceable requirements, and enterprises are now building retroactively what they should have built first.
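The two figures in this paragraph reduce to simple arithmetic. The Python sketch below makes two assumptions. First, it applies only the Act's headline fine tier for an undertaking, where the cap is the higher of the two amounts. Lower tiers and the separate treatment of SMEs are ignored. Second, it assumes the market forecast compounds annually over the 11 years from 2025 to 2036. The function names are illustrative only.

```python
def max_fine_eur(worldwide_turnover_eur: float) -> float:
    """Cap of the headline EU AI Act fine tier for an undertaking:
    EUR 35 million or 7% of worldwide annual turnover, whichever is higher.
    (Simplification: other tiers and SME rules are not modelled.)"""
    return max(35_000_000.0, 0.07 * worldwide_turnover_eur)


def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    for turnover in (100e6, 500e6, 2e9):
        print(f"turnover EUR {turnover:,.0f} -> max fine EUR {max_fine_eur(turnover):,.0f}")
    # Governance market forecast cited above: $2.2B (2025) -> $11B (2036).
    print(f"implied CAGR: {implied_cagr(2.2, 11.0, 2036 - 2025):.1%}")
```

Under these assumptions, the cap for an undertaking with turnover below €500 million is the fixed €35 million. Above that level, the 7% figure dominates. The forecast implies growth of roughly 16% a year.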

The governance delay creates a specific organizational dynamic. When legal and compliance teams flag AI projects as high-risk, they are frequently correct. This is not because AI is inherently dangerous. It is because the deployment was designed without the documentation, audit trail, explainability layer, or data provenance that regulated environments require. The fix is not to override compliance. It is to build governance into the deployment architecture from the beginning, which is something most organizations did not do during the 2023–2024 pilot wave and are now paying the integration cost to retrofit.
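Building governance in from the beginning can be as simple as refusing to record a decision without its provenance and rationale. The sketch below assumes a hypothetical `DecisionRecord` schema and an append-only JSON Lines audit log. The field names, the file format, and the rule that incomplete records are rejected are illustrative assumptions. None of them is a requirement taken from the EU AI Act or any specific framework.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One AI-assisted decision, captured when it is made (hypothetical schema)."""
    model_id: str
    model_version: str
    data_sources: list[str]  # provenance: which systems supplied the inputs
    explanation: str         # human-readable rationale for the output
    decision: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def missing_controls(record: DecisionRecord) -> list[str]:
    """List governance fields left empty; an empty list means the record is complete."""
    missing = []
    if not record.model_version:
        missing.append("model_version")
    if not record.data_sources:
        missing.append("data_sources")
    if not record.explanation.strip():
        missing.append("explanation")
    return missing


def append_to_audit_log(path: str, record: DecisionRecord) -> None:
    """Append the record as one JSON line, refusing incomplete records."""
    gaps = missing_controls(record)
    if gaps:
        raise ValueError(f"record missing governance fields: {gaps}")
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(asdict(record)) + "\n")


if __name__ == "__main__":
    rec = DecisionRecord(
        model_id="procurement-agent",  # hypothetical identifiers throughout
        model_version="2026-01",
        data_sources=["erp.purchase_orders", "crm.vendors"],
        explanation="Vendor matched contract terms on price and delivery window.",
        decision="approve",
    )
    log_path = os.path.join(tempfile.gettempdir(), "ai_audit_log.jsonl")
    append_to_audit_log(log_path, rec)
    print(f"logged decision to {log_path}")
```

The sketch rejects the write instead of logging a warning, and that is deliberate. It makes the audit trail a precondition of deployment rather than something reconstructed afterward.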

The Gap Between Access and Adoption

There is one more structural layer that deserves naming: the gap between tool access and actual workflow integration. It is different from data readiness and legacy infrastructure, and it is the structural problem that is most often mislabeled as a culture problem.

Deloitte’s 2026 worker-access finding shows the same structural gap. Sanctioned AI access rose by 50% in a year, from under 40% to under 60%, but fewer than 60% of workers with access use AI in their daily workflow. That gap between having access and integrating AI into daily work is not primarily explained by resistance or fear. It is explained by the absence of workflow redesign. Tools were procured and deployed. The workflows they were supposed to change were not redesigned to accommodate them. Workers were given access to AI without being given new processes that made AI use the path of least resistance.
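The percentages in this paragraph combine in a way that is easy to misread. The Python sketch below makes two simplifying assumptions. It treats the reported bounds ("under 40%", "under 60%") as if they were exact upper values. It also counts daily users only among workers with sanctioned access. Under those assumptions, the 50% rise is a relative increase equal to about 20 percentage points. And fewer than 60% of fewer than 60% means under 36% of the whole workforce uses sanctioned AI daily.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change between two shares, e.g. 0.40 -> 0.60 is +50%."""
    return (after - before) / before


def daily_use_share(access_rate: float, use_rate_given_access: float) -> float:
    """Share of the whole workforce using sanctioned AI daily, assuming daily
    users are counted only among workers who have sanctioned access."""
    return access_rate * use_rate_given_access


if __name__ == "__main__":
    before, after = 0.40, 0.60  # upper bounds: the finding says "under 40%" and "under 60%"
    print(f"access: +{(after - before) * 100:.0f} percentage points, "
          f"{relative_change(before, after):+.0%} relative")
    print(f"daily users across the whole workforce: under {daily_use_share(0.60, 0.60):.0%}")
```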

BCG’s 2025 worker survey. Access is rising. Embedding is lagging. The reason is not individual resistance but the absence of structured workflow change, which is an organizational design and management problem, not a psychology problem. A Gallup survey. You cannot integrate AI into daily work through strategy gaps and hope.

The Problem Is Solvable, Which Is the Point

The reason this structural diagnosis matters more than the cultural one is not just analytical precision. It is practical consequence.

Cultural transformation at enterprise scale is a multi-year program with uncertain outcomes, deep dependency on leadership continuity, and no guaranteed connection between effort and result. Infrastructure problems (data fragmentation, legacy integration debt, governance architecture gaps, workflow redesign) are engineering and management problems. They have scopes. They have sequencing. They have defined milestones. Organizations that have cleared them are producing measurable outcomes: AmplifAI reports. The leaders are not culturally different from the laggards. They are architecturally different.

The most important reframing for enterprises that are genuinely trying to move faster is this: the question is not “how do we get our people to embrace AI?” The question is “what in our infrastructure is preventing AI from being useful when our people try to use it?” That second question has answers that can be found, prioritized, resourced, and solved. The first question mostly produces retreats and keynote speakers.

The enterprises that crack AI adoption at scale over the next three years will not be the ones that ran the best change management programs. They will be the ones that spent the unglamorous time building clean data pipelines, retiring the integration debt in their ERP environments, standing up governance frameworks before regulators made them, and redesigning workflows rather than just deploying tools into existing ones. None of that is a story that sells conference passes. All of it is what actually works.
