OpenAI is making a more direct push into scientific research with GPT-Rosalind, a frontier reasoning model built for biology, drug discovery, and translational medicine. The launch matters not only because it introduces a domain-specific model series, but because it shows how OpenAI is starting to package frontier systems around high-value professional workflows rather than only broad consumer use cases.

In practical terms, GPT-Rosalind is aimed at the slowest and most expensive part of the pharmaceutical pipeline: early discovery. OpenAI argues that better evidence synthesis, stronger hypothesis generation, and more structured experimental planning could help researchers move faster long before a candidate ever reaches clinical trials or regulators.

OpenAI Is Targeting the Earliest Bottlenecks in Drug Discovery

The company frames life sciences as a workflow problem as much as a reasoning problem. Researchers work across literature, specialized databases, lab outputs, sequence data, and evolving biological hypotheses, often in processes that are fragmented and difficult to scale. GPT-Rosalind is designed to sit inside those loops and help scientists reason across molecules, proteins, genes, pathways, and disease-relevant biology in a more connected way.

That positioning is notable. OpenAI is not pitching GPT-Rosalind as a magic replacement for wet-lab science. It is pitching the model as a system for narrowing the search space earlier, so teams can test better ideas sooner and waste less time on weak hypotheses. For enterprise research organizations, that is a more credible and commercially relevant story than vague promises about AI transforming science overnight.

Source: OpenAI Blog

The Model Launch Comes With Benchmarks and a Codex Plugin

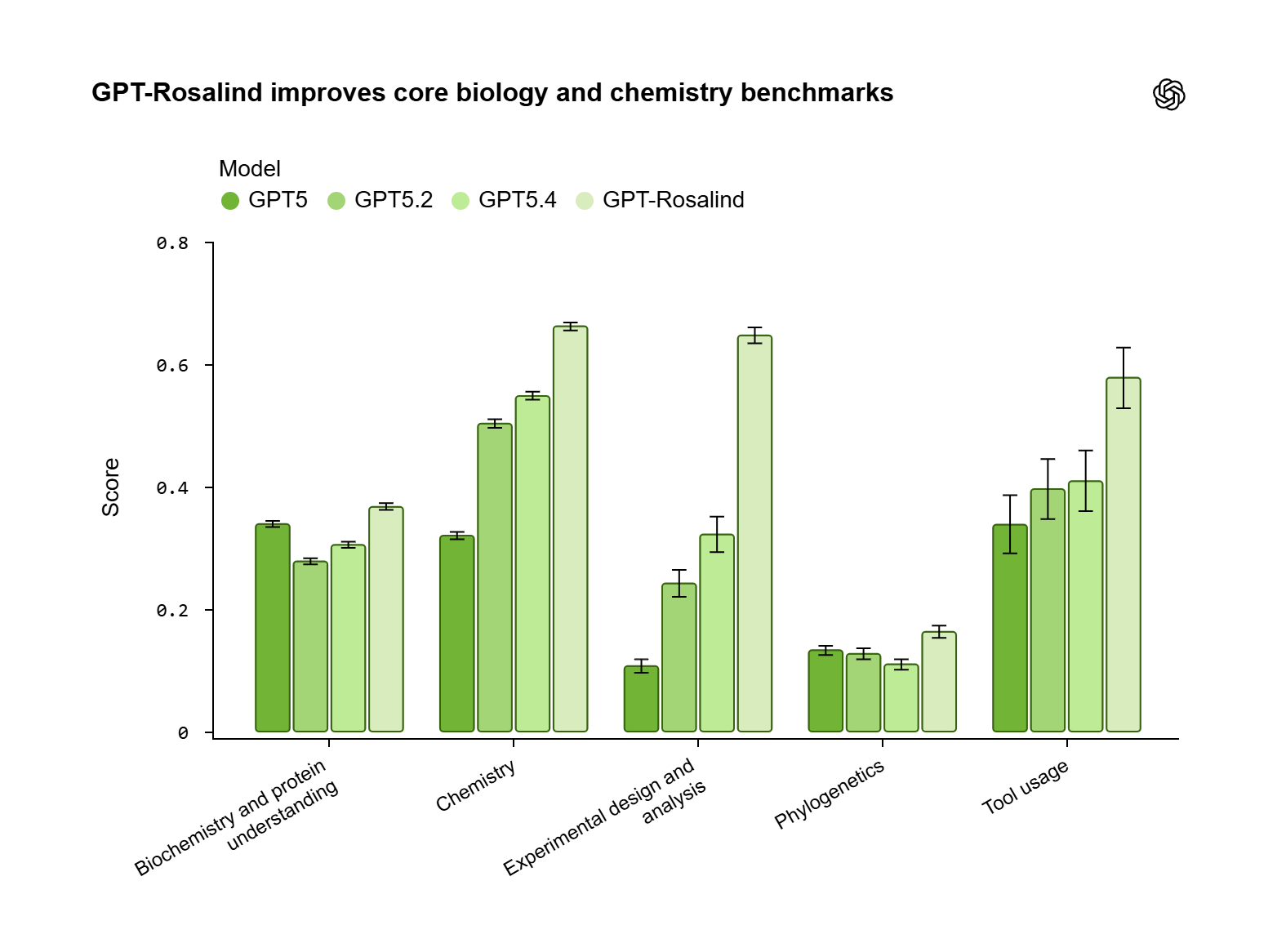

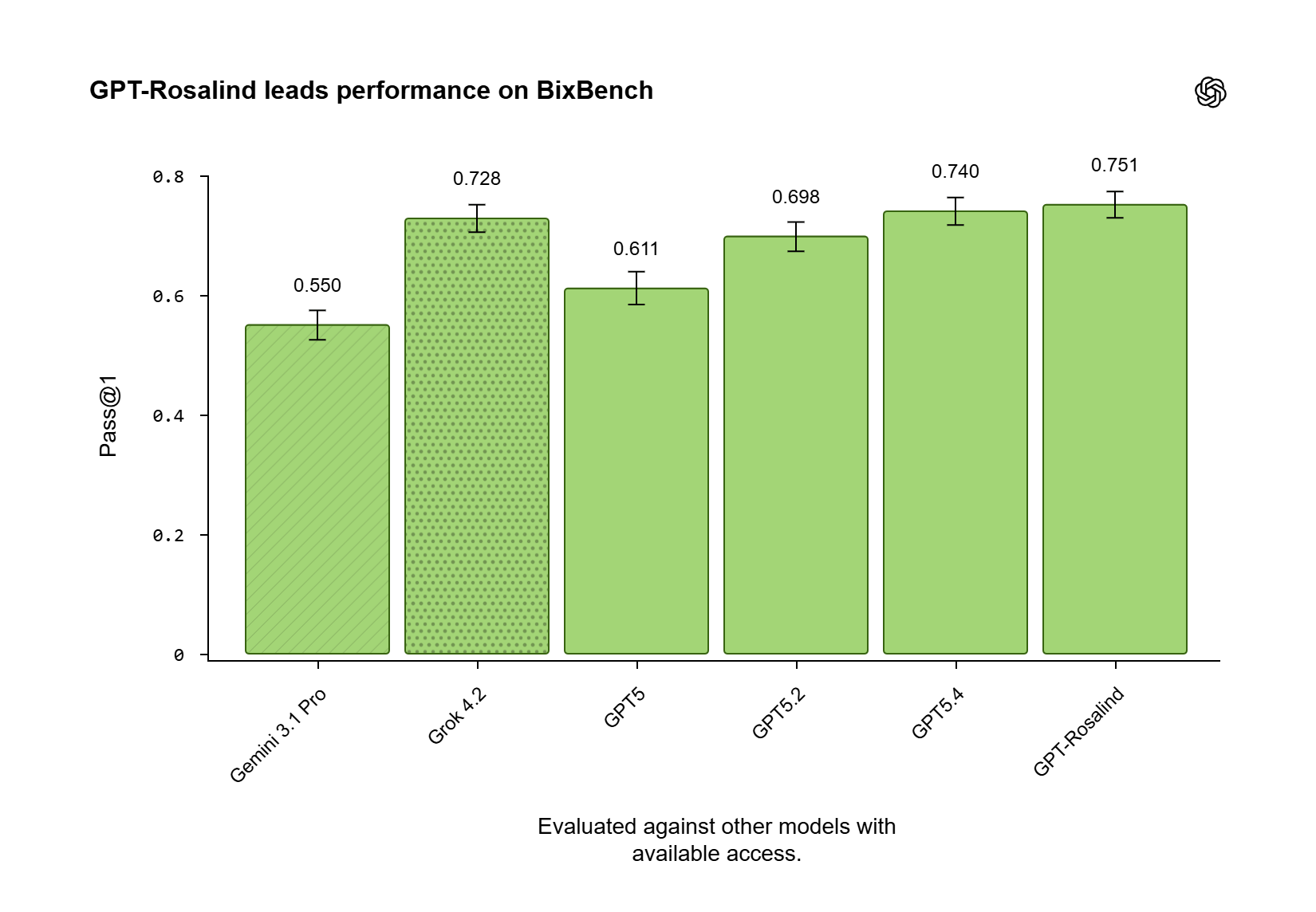

OpenAI says GPT-Rosalind leads on BixBench and outperforms GPT-5.4 on 6 of 11 LABBench2 tasks, with especially strong gains on cloning-related workflows. The company also highlighted an evaluation with Dyno Therapeutics, where best-of-ten model submissions in the Codex app ranked above the 95th percentile of human experts on one RNA prediction task and around the 84th percentile on a sequence generation task.

Those numbers should still be read as directional rather than definitive proof of lab-world impact. But they do suggest OpenAI is trying to validate the system against real research workflows instead of limiting the launch to abstract model capability claims.
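For readers unfamiliar with the metric, a best-of-ten percentile can be sketched as follows. This is a minimal illustration, not the evaluation's actual method: the source does not describe how the Dyno Therapeutics scores were computed, and every number and function name below (`percentile_rank`, `best_of_n_percentile`, the score lists) is hypothetical.

```python
from bisect import bisect_left


def percentile_rank(score, reference_scores):
    """Return the percentage of reference scores strictly below `score`."""
    ordered = sorted(reference_scores)
    below = bisect_left(ordered, score)  # count of values < score
    return 100.0 * below / len(ordered)


def best_of_n_percentile(model_scores, human_scores):
    """Rank only the strongest of n model attempts against the human pool."""
    return percentile_rank(max(model_scores), human_scores)


# Hypothetical data: 20 human expert scores (40..59) and ten model attempts.
human_scores = list(range(40, 60))
model_scores = [41, 47, 52, 44, 59, 39, 50, 48, 55, 43]

best = best_of_n_percentile(model_scores, human_scores)
single = percentile_rank(model_scores[1], human_scores)
print(f"best-of-ten percentile: {best}")
print(f"one single attempt:     {single}")
```

Because best-of-ten keeps only the strongest of ten attempts, it can never rank lower than any individual attempt, which is one reason such figures are better treated as an upper-end indicator than as typical single-run performance.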

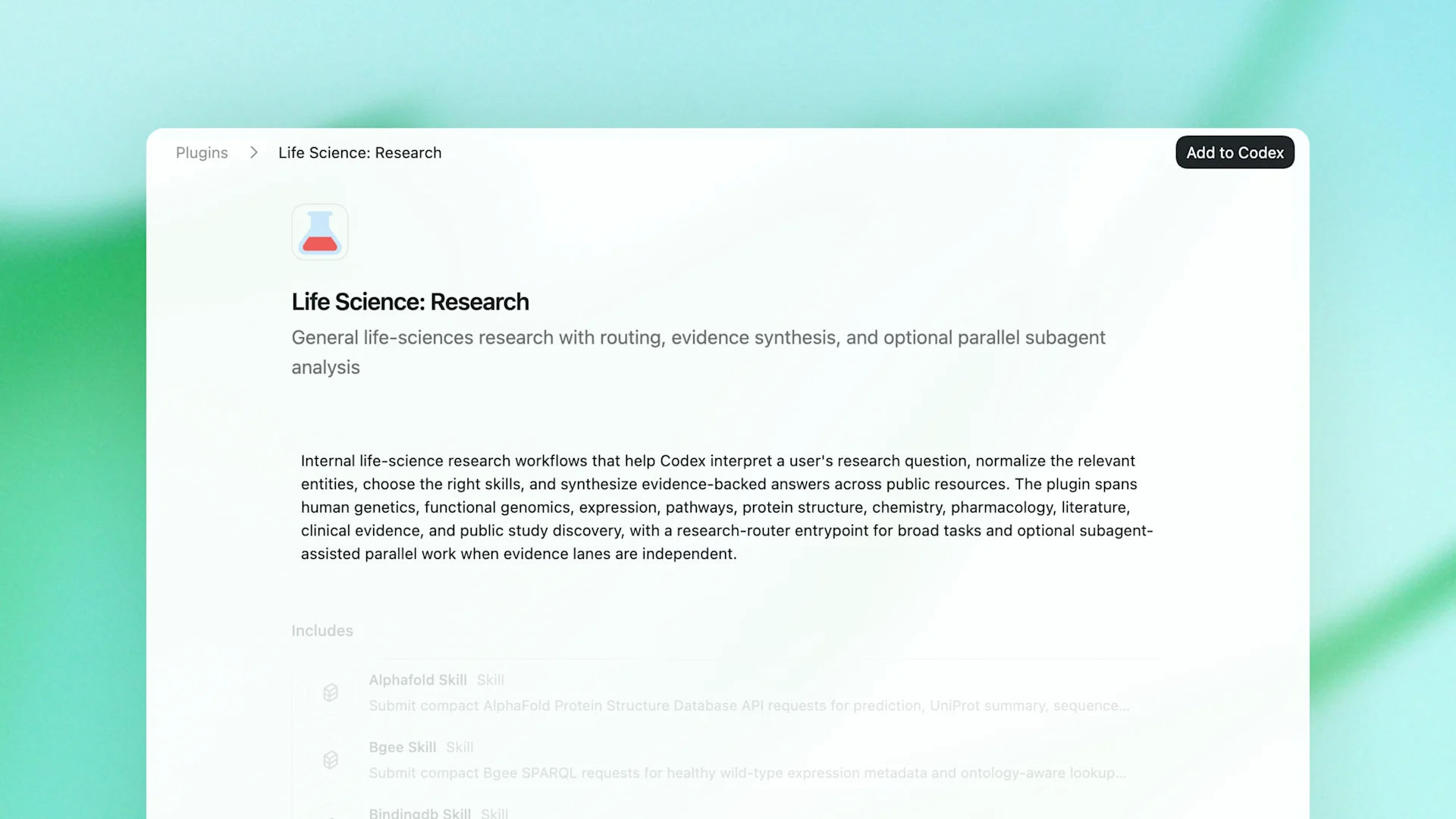

To make the model more usable in practice, OpenAI is also releasing a Life Sciences Research Plugin for Codex. The package connects models to more than 50 public biology tools, literature sources, and multi-omics databases, giving researchers a more operational way to move from broad questions to concrete follow-up work.

Source: OpenAI Blog

Trusted Access Is Part of the Product Story

OpenAI is not rolling GPT-Rosalind out as a general public model. The system is available as a research preview in ChatGPT, Codex, and the API for qualified customers through a trusted-access structure that starts with U.S. Enterprise users. That means eligibility, governance, access controls, and misuse prevention are part of the launch package, not an afterthought.

This is where the story intersects with Responsible AI more clearly. Life sciences is one of the domains where stronger model capability creates both upside and real misuse risk. OpenAI’s answer is a gated deployment model paired with enterprise-grade security controls and a qualification process that researchers can start through its access request form.

The company is also surrounding the product with a services layer. OpenAI says its Life Sciences team, along with advisory partners including McKinsey, BCG, and Bain, will help customers identify use cases and integrate the model into governed research environments. That signals a familiar enterprise pattern: the product is the model, but the go-to-market motion is closer to consultative transformation.

Why GPT-Rosalind Matters Beyond This Single Release

The deeper significance is strategic. GPT-Rosalind suggests OpenAI sees domain-specific reasoning models as a meaningful next step for frontier AI, especially in industries where tool use, long-horizon workflows, and high-stakes decision making matter more than general chatbot fluency. If that approach works in life sciences, it could become a template for other tightly governed professional domains.

OpenAI says this is only the first release in its GPT-Rosalind series, which leaves plenty unanswered about pricing, broader availability, and how much measurable scientific lift the system can deliver outside curated evaluations. But the direction is already clear: OpenAI wants frontier AI to become an active research partner inside enterprise discovery workflows, not just a writing assistant parked beside them.

OpenAI also announced the launch in a post on X:

Introducing GPT-Rosalind, our frontier reasoning model built to support research across biology, drug discovery, and translational medicine. pic.twitter.com/PubLU0FkSv

— OpenAI (@OpenAI) April 16, 2026

Organizations that want to explore access can start with OpenAI’s qualification and safety review process or contact the company’s sales team for enterprise discussions.
