Mistral AI is trying to solve one of the least glamorous, most expensive problems in enterprise AI: getting model-powered processes to run reliably after the demo works. The company has released Workflows in public preview, describing it as an orchestration layer for AI systems that need durability, observability, fault tolerance, and human review.

That framing matters because many companies are no longer blocked by access to capable models. They are blocked by the production machinery around those models: retries, approvals, audit trails, long-running state, deployment boundaries, and a clear way to see what happened when an automated process fails.

Mistral Is Targeting the Production Gap

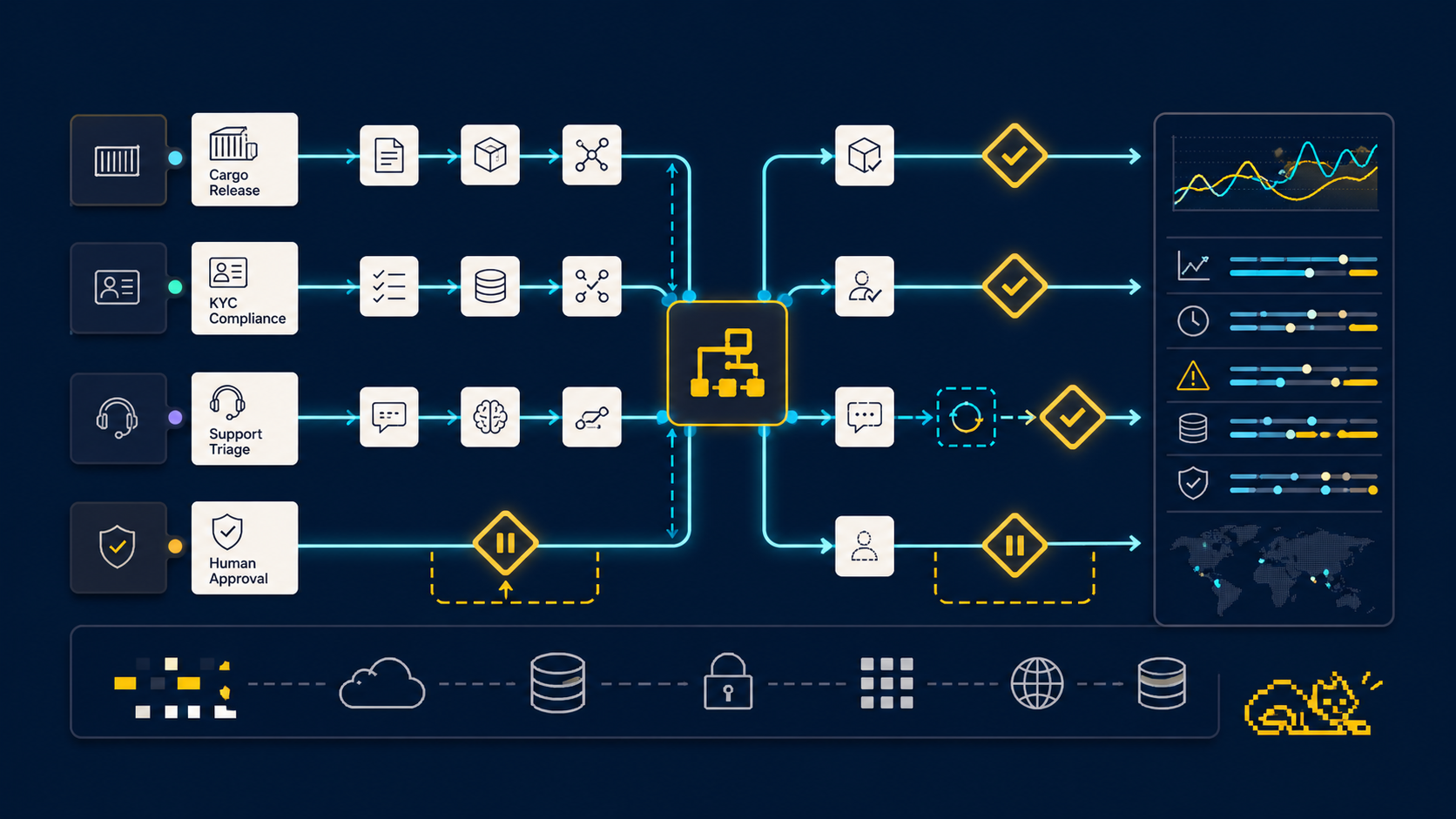

According to Mistral’s announcement, Workflows is part of Studio and lets developers write business processes in Python, then publish them to Le Chat so business users can trigger them. Every step is tracked in Studio, creating a timeline that teams can inspect for auditability, debugging, and operational review.
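To make the timeline idea concrete, here is a minimal Python sketch of step-level tracking. It is not Mistral's API: `Timeline`, `StepRecord`, and the two example steps are hypothetical names invented for illustration, and a real system would persist records rather than hold them in memory.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class StepRecord:
    name: str
    status: str        # "ok" or "error"
    duration_s: float
    detail: str = ""   # exception text when the step failed


@dataclass
class Timeline:
    """Hypothetical in-memory timeline; illustration only."""
    records: list = field(default_factory=list)

    def run(self, name: str, fn: Callable[..., Any], *args: Any) -> Any:
        # Time the step and record its outcome whether it succeeds or fails.
        start = time.perf_counter()
        try:
            result = fn(*args)
        except Exception as exc:
            elapsed = time.perf_counter() - start
            self.records.append(StepRecord(name, "error", elapsed, repr(exc)))
            raise
        elapsed = time.perf_counter() - start
        self.records.append(StepRecord(name, "ok", elapsed))
        return result


# Two made-up steps standing in for real business logic.
def extract_fields(doc: str) -> dict:
    return {"length": len(doc)}


def validate(fields: dict) -> bool:
    if fields["length"] == 0:
        raise ValueError("empty document")
    return True


timeline = Timeline()
fields = timeline.run("extract_fields", extract_fields, "bill of lading")
timeline.run("validate", validate, fields)
for record in timeline.records:
    print(record.name, record.status)
```

Because failures are recorded before the exception propagates, the timeline still shows which step broke and why, which is the property that matters for debugging and audit.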

The core argument is familiar to anyone who has tried to move AI automation past a prototype. A notebook or internal script can prove that a process is possible, but production introduces harder requirements. A process may need to survive a network timeout, pause for a human decision, resume hours later without losing state, and explain exactly which branch or retry produced a final outcome.

Mistral says Workflows addresses that with durable execution, observability, and built-in human-in-the-loop approval. In practical terms, a developer can write the business logic while the platform handles state, tracing, retries, timeouts, rate limits, and approval pauses around it.
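As a rough illustration of the plumbing a platform like this takes off a developer's hands, the sketch below retries a flaky step with exponential backoff. It is a generic pattern, not Mistral's or Temporal's implementation; `run_with_retries`, `TransientError`, and the parameter names are assumptions made for this example.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying, such as a network timeout."""


def run_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last failure
            # Wait 0.5s, 1s, 2s, ... (with the default base) before retrying.
            sleep(base_delay_s * 2 ** (attempt - 1))
    raise AssertionError("unreachable")


# A made-up step that fails twice before succeeding.
calls = {"count": 0}


def flaky_step() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("simulated timeout")
    return "ok"


delays = []  # capture the delays instead of actually sleeping
result = run_with_retries(flaky_step, max_attempts=3, sleep=delays.append)
print(result, delays)
```

Injecting `sleep` keeps the example fast and testable. In a durable execution engine the retry state would also survive a process restart, which this in-process loop does not attempt.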

The Use Cases Are Operational, Not Decorative

Mistral’s examples are deliberately mundane, which is the point. Cargo release automation, document compliance checking, and support triage are not flashy consumer AI demos. They are the kinds of repetitive, regulated, multi-step workflows where enterprises can justify automation only if the system is reliable enough to trust.

For cargo release, Mistral describes a workflow that validates shipping documents against customs rules, checks for anomalies, pauses for human sign-off where needed, and then releases cargo after approval. The important detail is the pause. A wait_for_input() step can stop the workflow without consuming compute, notify the reviewer, and resume from the same point when a decision arrives.
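The pause-and-resume behavior can be sketched in plain Python by persisting the run's state and exiting, then reloading it when a decision arrives. This is a toy model of the idea, not the actual wait_for_input() API, whose signature the announcement does not spell out; `STORE`, `start_release`, `resume_release`, and the shipment fields are all hypothetical.

```python
import json

# Hypothetical stand-in for durable storage; a real system would use a database.
STORE: dict = {}


def start_release(run_id: str, shipment: dict) -> str:
    """Run the automated checks, then either release or park the run for review."""
    anomalies = []
    if shipment["declared_weight_kg"] != shipment["measured_weight_kg"]:
        anomalies.append("weight mismatch")
    status = "waiting_for_input" if anomalies else "released"
    # Persist everything needed to continue later; nothing stays running while waiting.
    STORE[run_id] = json.dumps(
        {"shipment": shipment, "anomalies": anomalies, "status": status}
    )
    return status


def resume_release(run_id: str, approved: bool, reviewer: str) -> str:
    """Reload the parked run and apply the reviewer's decision."""
    state = json.loads(STORE[run_id])
    if state["status"] != "waiting_for_input":
        raise RuntimeError(f"run {run_id} is not waiting for a decision")
    state["status"] = "released" if approved else "rejected"
    state["reviewer"] = reviewer  # keep who decided, for the audit trail
    STORE[run_id] = json.dumps(state)
    return state["status"]


shipment = {"id": "SHP-1", "declared_weight_kg": 1200, "measured_weight_kg": 1350}
print(start_release("run-1", shipment))   # parks the run for review
print(resume_release("run-1", approved=True, reviewer="ops@example.com"))
```

The design point is that waiting costs nothing: between the two calls there is only a stored record, so the review can take minutes or days without holding a worker.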

For compliance checks, the company points to KYC reviews that require identity extraction, sanctions and PEP screening, jurisdiction-specific requirements, and structured risk assessments. The value proposition is not just speed. It is the ability to produce a traceable account of the steps and evidence behind a decision.

For support triage, Mistral focuses on correctability. Automated routing will sometimes classify a ticket incorrectly. Workflows is meant to expose the routing decision in Studio, so a team can inspect and adjust the workflow rather than treating the model as a black box.
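One way to picture an inspectable routing decision is a record that carries its own reason and can be overridden without losing history. The sketch below uses keyword rules as a stand-in for a model-based classifier; `RULES`, `RoutingDecision`, `route`, and `override` are hypothetical and do not reflect how Workflows represents decisions.

```python
from dataclasses import dataclass, replace
from typing import Optional

# Hypothetical keyword rules standing in for a model-based classifier.
RULES = [
    ("refund", "billing"),
    ("invoice", "billing"),
    ("password", "account"),
    ("crash", "engineering"),
]


@dataclass(frozen=True)
class RoutingDecision:
    ticket_id: str
    queue: str
    reason: str
    overridden_by: Optional[str] = None


def route(ticket_id: str, text: str) -> RoutingDecision:
    """Pick a queue using the first matching rule and record why."""
    lowered = text.lower()
    for keyword, queue in RULES:
        if keyword in lowered:
            return RoutingDecision(ticket_id, queue, f"matched keyword '{keyword}'")
    return RoutingDecision(ticket_id, "general", "no rule matched")


def override(decision: RoutingDecision, queue: str, reviewer: str) -> RoutingDecision:
    """Return a corrected decision that keeps the original routing in its reason."""
    return replace(
        decision,
        queue=queue,
        reason=f"manual override (was {decision.queue}: {decision.reason})",
        overridden_by=reviewer,
    )


# The first rule wins, so this ticket lands in billing even though it reports a crash.
decision = route("T-1", "The app crashes after I request a refund")
fixed = override(decision, "engineering", "lead@example.com")
print(decision.queue, "->", fixed.queue)
print(fixed.reason)
```

Seeing the matched rule next to the outcome is what lets a team fix the cause (here, rule ordering) rather than only the individual ticket.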

Mistral AI (@MistralAI), April 28, 2026: "🆕 Today, we're releasing the public preview of Workflows, the orchestration layer for enterprise AI. 🌎 Enterprise teams have capable models. What they don't have is a way to run them reliably in production. That's the gap Workflows fills. It takes AI-powered business processes…"

Temporal Under the Hood Gives Mistral a Serious Backbone

Workflows is built on Temporal’s durable execution engine, which Mistral notes is used for orchestration at companies including Netflix, Stripe, and Salesforce. Mistral says it has extended that base for AI-specific workloads, adding streaming, payload handling, multi-tenancy, and observability features that the core engine does not provide out of the box.

The deployment model is split between Mistral and the customer’s own environment. Mistral hosts the orchestration infrastructure, Workflows API, and Studio, while customers deploy workers in their own Kubernetes environments through a Helm chart. That separation is central to the enterprise pitch: business logic and data processing can stay inside the customer’s perimeter, whether that is cloud, on-premises, or hybrid.

This also fits Mistral’s broader strategy. The company is not only selling models. It is building more of the surrounding enterprise platform, from agents and connectors to Studio and Le Chat. Workflows gives that stack a production control layer, which is where many AI projects either become useful or quietly stall.

Enterprise AI Is Becoming an Operations Problem

The larger signal is that enterprise AI competition is shifting from model demos to operational reliability. Companies want agents and automations, but they also want the ordinary engineering guarantees that business-critical software requires: visibility, access control, replayability, deployment flexibility, and the ability to put a human in the loop before a consequential action happens.

Mistral says organizations including ASML, ABANCA, CMA-CGM, France Travail, La Banque Postale, and Moeve are already using Workflows to automate critical processes. That customer list helps frame the product less as an experimental feature and more as an attempt to make agentic AI legible to the people responsible for compliance, operations, and uptime.

The hardest part will be proving that this layer can stay simple enough for developers while satisfying the governance expectations of large organizations. If Mistral can make that balance work, Workflows could become one of the more important pieces of its enterprise AI stack: not the model that answers the question, but the system that makes sure the answer becomes a reliable business process.
