Google is trying to turn Gemini’s research agents into something closer to enterprise infrastructure than an advanced summarization tool. With the launch of a revamped Deep Research agent and a new Deep Research Max tier, the company is pitching autonomous research not as a single report generator, but as a flexible foundation for long-horizon workflows that pull from the open web, internal files, remote MCP servers, and specialized professional data streams.

The key shift is not just raw model quality. Google says the new system, built with Gemini 3.1 Pro, can now support more exhaustive context gathering, richer multimodal grounding, collaborative planning before execution, native data visualizations, and tighter integration with custom enterprise sources. That makes the launch especially relevant for teams in finance, life sciences, and market intelligence, where research is rarely limited to public search alone.

Google Is Splitting the Product Into Two Research Modes

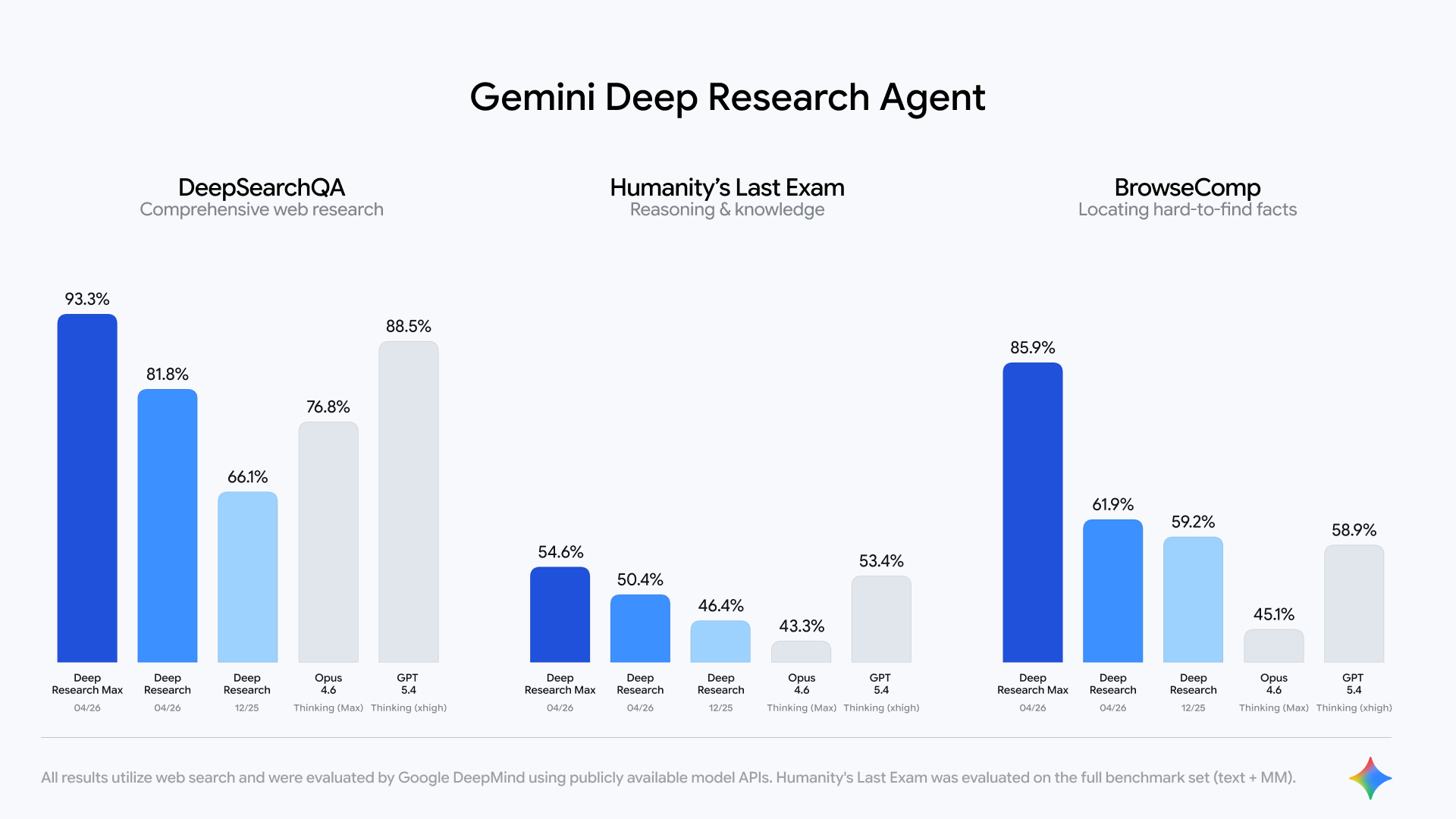

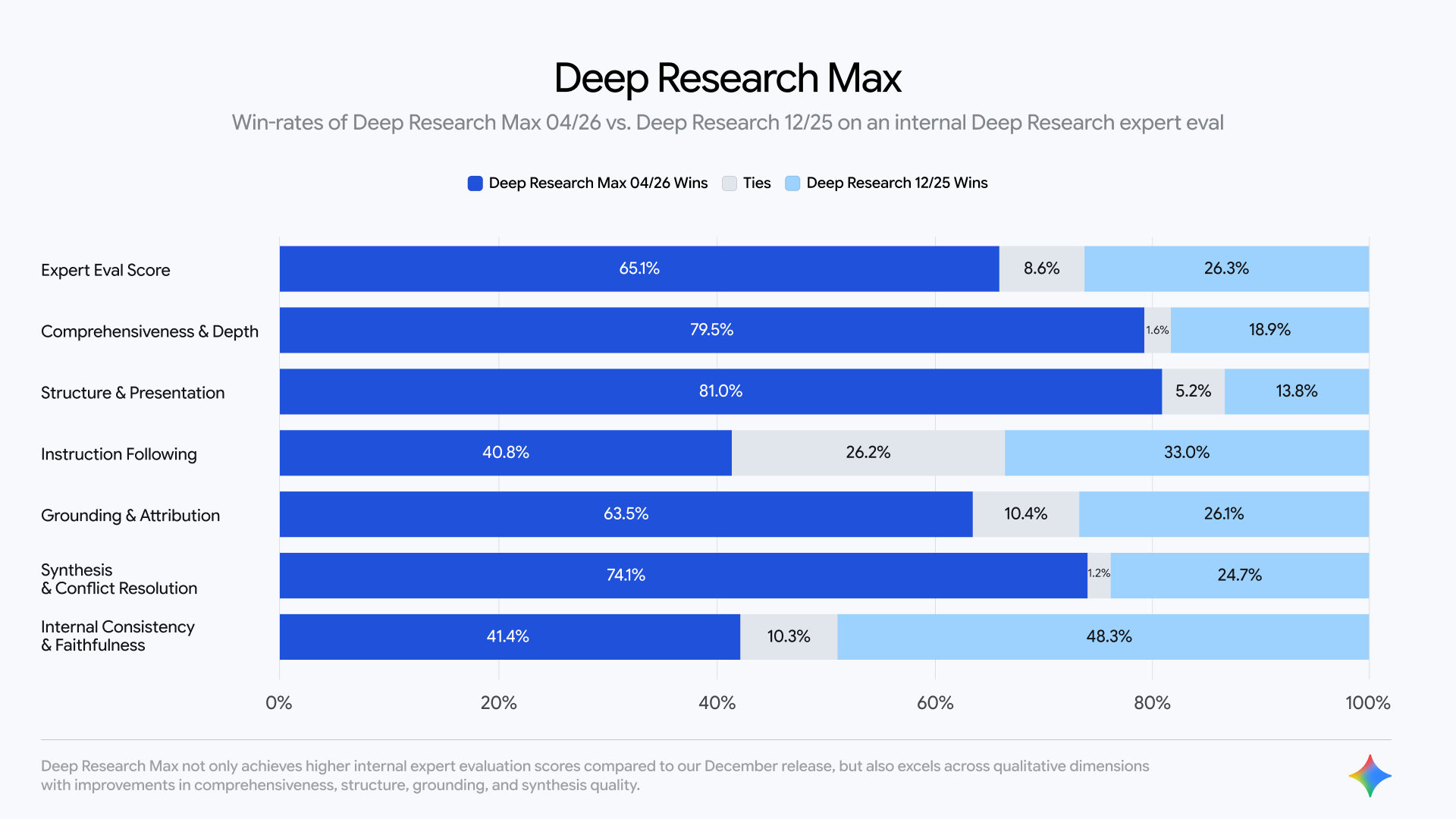

The launch introduces a clearer two-track structure. The standard Deep Research experience is positioned as the faster and more efficient option, built for interactive user-facing experiences where latency and cost matter. Deep Research Max, by contrast, is designed for more exhaustive and higher-quality output, using extended test-time compute to search, reason, and refine more aggressively before producing a final report.

That distinction matters because “autonomous research” is not one workload. Sometimes a product team wants a quick but structured answer inside a live interface. Other times an analyst team wants a slower, more thorough process that runs overnight and has a fully cited due diligence report ready by morning. Google is effectively acknowledging that those are different jobs and that a single research agent setting cannot serve both equally well.
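The split between the two modes can be sketched as a simple routing decision. This is a minimal illustration only: the tier labels, the `ResearchJob` type, and the ten-minute threshold are hypothetical and are not taken from Google's API.

```python
from dataclasses import dataclass

# Hypothetical tier labels for illustration; not confirmed API model identifiers.
STANDARD_TIER = "deep-research"
MAX_TIER = "deep-research-max"


@dataclass
class ResearchJob:
    query: str
    interactive: bool         # True if a user is waiting in a live interface
    latency_budget_s: float   # how long the caller can wait for a report


def choose_tier(job: ResearchJob, max_tier_min_budget_s: float = 600.0) -> str:
    """Pick the faster tier for live or short-deadline jobs, Max otherwise.

    The 600-second default is an arbitrary assumption for this sketch.
    """
    if job.interactive or job.latency_budget_s < max_tier_min_budget_s:
        return STANDARD_TIER
    return MAX_TIER
```

Under these assumptions, a chat-style request would route to the standard tier, while an overnight due diligence batch with a budget of several hours would route to Max.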

This also marks a substantial evolution from the earlier Deep Research release Google introduced back in December. The company is now describing the updated system as a first step in broader agentic pipelines, where research does not end at the report itself but becomes a front-end stage in larger automated workflows.

Source: Google Blog

MCP Support Pushes Gemini Into Proprietary Data Workflows

The biggest enterprise signal in the launch is MCP support. Google says Deep Research can now connect to arbitrary remote MCP servers, custom tools, file uploads, connected file stores, and web search in different combinations. In plain terms, the agent is no longer limited to the public internet. It can be pointed at the gated, structured, and highly specific data environments that professionals actually rely on.

That is where the product starts to become more strategically important. An autonomous research system that can only search the public web is useful, but narrow. One that can move between SEC filings, internal documents, premium financial feeds, uploaded PDFs, CSVs, and other multimodal assets starts to look like a serious enterprise research layer.

Google says it is already working with FactSet, S&P Global, and PitchBook on MCP server designs. That matters because these are exactly the kinds of providers that define research quality in regulated and high-stakes environments. If shared customers can wire those sources directly into Gemini-powered workflows, the practical value of the product rises substantially.

Native Charts and Multimodal Grounding Make the Output More Actionable

Another notable addition is native chart and infographic generation. Google says Deep Research can now generate in-line visuals using HTML or Nano Banana, which changes the output from a block of text into something closer to a stakeholder-ready report. That is a meaningful upgrade because a large share of research work is not just about finding the right answer. It is about packaging that answer in a way that decision-makers can absorb quickly.

The platform is also broadening how research can be grounded. Google says users can feed the agent PDFs, CSVs, images, audio, and video, while also choosing whether to combine web access with other tools or turn web access off entirely. Add in collaborative planning before the run begins and real-time streaming of intermediate reasoning summaries, and the system starts to look more controllable than many “agent” products that promise autonomy but reveal very little during execution.
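One way to picture the mix-and-match grounding options is as a small configuration object. The sketch below is hypothetical: `GroundingConfig`, its fields, and the example URL are invented for illustration and do not reflect the actual Interactions API schema.

```python
from dataclasses import dataclass, field


@dataclass
class GroundingConfig:
    """Hypothetical description of which sources a research run may use."""
    web_search: bool = True
    mcp_server_urls: list[str] = field(default_factory=list)
    file_paths: list[str] = field(default_factory=list)

    def enabled_sources(self) -> list[str]:
        # Flatten the configuration into a list of labeled sources.
        sources = []
        if self.web_search:
            sources.append("web_search")
        sources.extend(f"mcp:{url}" for url in self.mcp_server_urls)
        sources.extend(f"file:{path}" for path in self.file_paths)
        return sources

    def validate(self) -> None:
        # A run with web access off and nothing else attached has no grounding.
        if not self.enabled_sources():
            raise ValueError("at least one grounding source must be enabled")


# Example: web access turned off, grounded only in private data.
private_only = GroundingConfig(
    web_search=False,
    mcp_server_urls=["https://mcp.example.com/filings"],  # placeholder URL
    file_paths=["q3_report.pdf"],
)
private_only.validate()
```

The validation step reflects a design choice in this sketch, not a documented behavior: if web access is disabled, the caller should have to supply at least one other source.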

This Is Really a Bet on Agentic Enterprise Research

The broader message is that Google wants Gemini to own more of the knowledge-work stack, not just the chatbot layer. By tying Deep Research and Deep Research Max to the Interactions API, the company is making a case that autonomous research should be something developers can call programmatically, embed in their own applications, and chain into recurring workflows.

That also explains the product positioning around “proven Google scale.” Google says the same research infrastructure already underpins capabilities in products such as the Gemini app, NotebookLM, Google Search, and Google Finance. Whether enterprises fully buy into that argument will depend on real-world reliability and factuality, especially in regulated environments where nuance matters more than speed. But as a platform signal, the intent is clear: Google wants Gemini to be the engine that gathers, structures, and visualizes context before other agents or humans make the final move.

The launch is available now in public preview for paid Gemini API tiers, with broader availability for startups and enterprises in Google Cloud promised soon. For developers building long-horizon research systems, the more important question is not whether Gemini can summarize information. It is whether Deep Research Max can become the default context-gathering layer for serious agentic workflows.

Below is Sundar Pichai’s X post highlighting the release:

We are launching two powerful updates to Deep Research in the Gemini API, now with better quality, MCP support, and native chart/infographics generation.

Use Deep Research when you want speed and efficiency, and use Max when you want the highest quality context gathering &… pic.twitter.com/rTp7R6w3IT

— Sundar Pichai (@sundarpichai) April 21, 2026
