Anthropic is bringing persistent memory to Claude Managed Agents, making the feature available in public beta for developers building longer-running agentic systems. The move gives Claude agents a way to learn from previous sessions while keeping those memories inspectable, exportable, and manageable through production controls.

That matters because the agent race is shifting from impressive one-off demos to systems that can survive repeated use. For enterprises, the question is no longer just whether an AI agent can complete a task once. It is whether the agent can remember what worked, avoid repeating known mistakes, and still give teams enough control to audit what it retained.

Memory Moves From Chat Feature to Agent Infrastructure

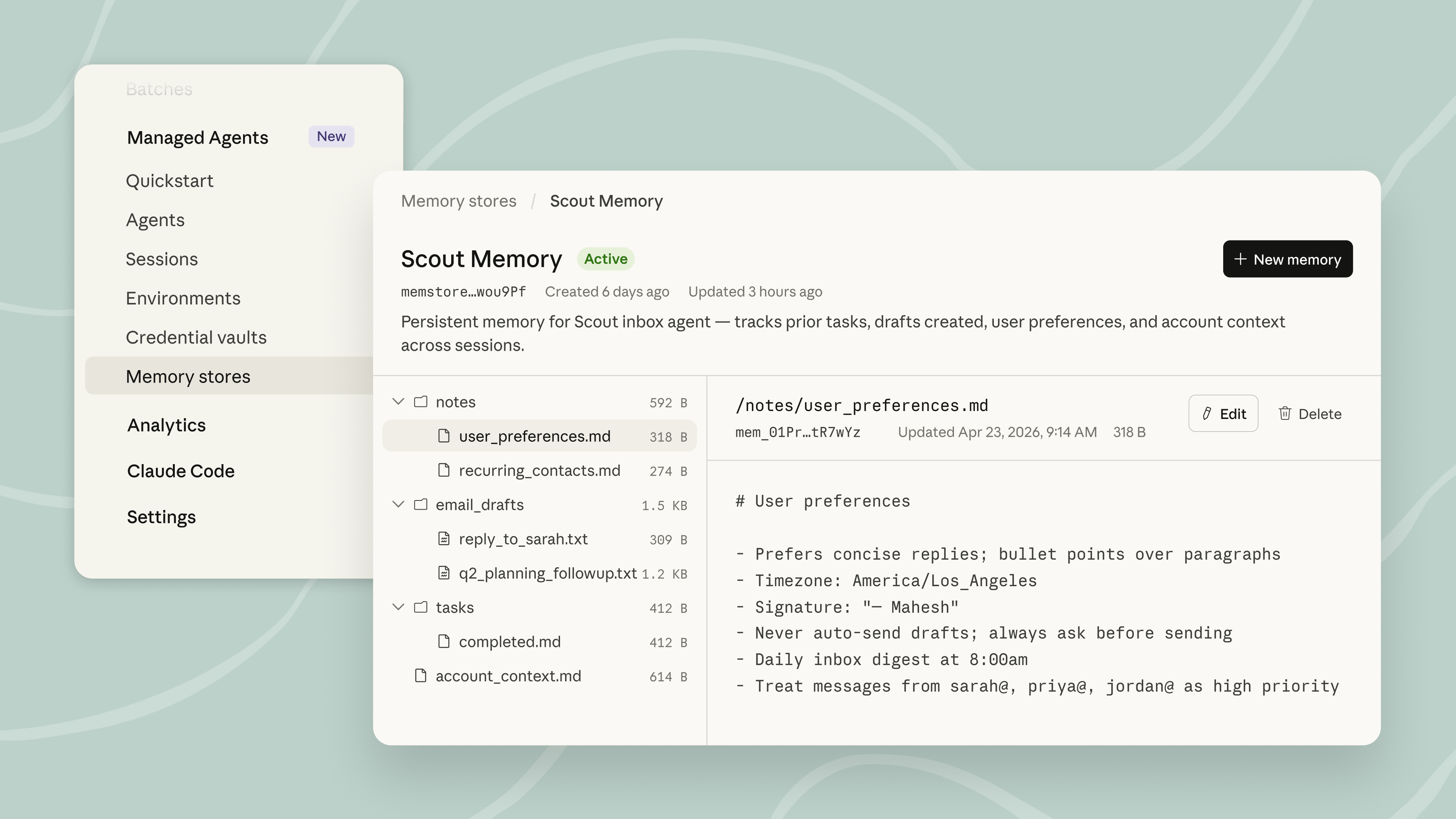

Anthropic describes the new memory layer as optimized for agents that operate across sessions, share lessons with other agents, and improve over time. Instead of treating memory as a vague personalization feature, the company is framing it as infrastructure for managed, production-grade agents.

The technical choice is important: memories are stored as files mounted directly onto a filesystem. That lets Claude use familiar agent tools, including bash and code execution, to read, organize, update, and reason over memory. In practice, this could make memory feel less like a separate retrieval system and more like part of the working environment an agent already uses.
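To make the filesystem idea concrete, here is a minimal local sketch, not Anthropic's implementation. It assumes a memory store is simply a directory of Markdown files. The directory layout, the file names, and the `remember` and `recall` helpers are all hypothetical and exist only for illustration.

```python
import tempfile
from pathlib import Path

# Assumption: a memory store is just a directory of plain-text files.
# The layout and helper names are illustrative, not Anthropic's API.

def remember(store: Path, topic: str, note: str) -> Path:
    """Append a note to the file for a topic, creating the file if needed."""
    path = store / f"{topic}.md"
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {note}\n")
    return path

def recall(store: Path, keyword: str) -> list[str]:
    """Return every stored line that contains the keyword (case-insensitive)."""
    hits = []
    for path in sorted(store.glob("*.md")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if keyword.lower() in line.lower():
                hits.append(f"{path.name}: {line}")
    return hits

def demo() -> list[str]:
    # A temporary directory stands in for the mounted memory store.
    with tempfile.TemporaryDirectory() as tmp:
        store = Path(tmp)
        remember(store, "invoices", "Vendor X sends dates as DD/MM/YYYY")
        remember(store, "invoices", "Retry OCR once before flagging a scan")
        remember(store, "contracts", "Signature page is often last, not first")
        return recall(store, "ocr")
```

Because the store is ordinary files, the same lookup could be done with a tool such as `grep`, which is the point of the design: no separate retrieval interface is needed to inspect what an agent has kept.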

Anthropic says this design gives developers more direct control. Memories can be exported, managed through the API, versioned, rolled back, or redacted from history. That is the kind of detail enterprise teams tend to care about because memory is powerful precisely where it is risky: it preserves context, decisions, corrections, and sometimes sensitive operational knowledge.
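The difference between versioning, rollback, and redaction can be shown with a small in-memory model. This is a sketch under stated assumptions: the `VersionedMemory` class and its methods are hypothetical, and the article does not describe how Anthropic implements these controls.

```python
from dataclasses import dataclass, field

REDACTED = "[redacted]"

@dataclass
class VersionedMemory:
    """Hypothetical model: keeps every version of one memory entry."""
    history: list[str] = field(default_factory=list)

    def update(self, content: str) -> int:
        """Store a new version and return its version number."""
        self.history.append(content)
        return len(self.history) - 1

    def current(self) -> str:
        return self.history[-1]

    def rollback(self, version: int) -> None:
        # Re-append the old content so the history stays append-only.
        self.history.append(self.history[version])

    def redact(self, version: int) -> None:
        # Redaction is the one operation that rewrites history in place.
        self.history[version] = REDACTED

def demo() -> tuple[str, list[str]]:
    memory = VersionedMemory()
    v0 = memory.update("Prefer CSV export for vendor X")
    v1 = memory.update("Vendor X contact: jane@example.com")
    memory.rollback(v0)  # restore the earlier lesson as the current version
    memory.redact(v1)    # scrub the sensitive version from history
    return memory.current(), memory.history
```

In this model a rollback adds a version rather than deleting one, so the record of what happened survives, while redaction deliberately removes content that should not be retained at all.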

Image credit: Claude Blog.

The Enterprise Pitch Is Control, Not Just Convenience

The public beta arrives with features aimed at teams that need governance around autonomous systems. Anthropic says memory stores can be shared across multiple agents with scoped permissions, allowing one store to be read-only for an organization while another is writable for a specific user or workspace. The company also says multiple agents can work against the same memory store without overwriting one another.
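The scoping described above can be pictured as a per-store access table. The sketch below is illustrative only: `ScopedStore`, the principal names, and the `"read"` and `"write"` scope strings are assumptions, not Anthropic's permission model or API.

```python
from dataclasses import dataclass, field

@dataclass
class ScopedStore:
    """Hypothetical in-memory stand-in for a memory store with access scopes."""
    name: str
    scopes: dict[str, str] = field(default_factory=dict)   # principal -> "read" | "write"
    entries: dict[str, str] = field(default_factory=dict)  # key -> memory text

    def read(self, principal: str, key: str) -> str:
        if self.scopes.get(principal) not in ("read", "write"):
            raise PermissionError(f"{principal} cannot read {self.name}")
        return self.entries[key]

    def write(self, principal: str, key: str, value: str) -> None:
        if self.scopes.get(principal) != "write":
            raise PermissionError(f"{principal} cannot write to {self.name}")
        self.entries[key] = value

def demo() -> tuple[str, bool]:
    # An organization-wide store is read-only for the agent;
    # a workspace store is writable by it.
    org = ScopedStore("org-guidelines", scopes={"admin": "write", "agent-a": "read"})
    workspace = ScopedStore("workspace-notes", scopes={"agent-a": "write"})
    org.write("admin", "style", "Use ISO 8601 dates")
    workspace.write("agent-a", "lesson", org.read("agent-a", "style"))
    try:
        org.write("agent-a", "style", "overwritten")
        blocked = False
    except PermissionError:
        blocked = True
    return workspace.entries["lesson"], blocked
```

Here the agent can copy an organization-level guideline into its own workspace notes, but its attempt to change the shared guideline is rejected.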

That is a practical answer to a real deployment problem. As companies build more agentic workflows, they often end up with brittle custom systems for saving context: prompt files, vector databases, hand-maintained notes, or internal retrieval layers stitched together around a model. Anthropic is trying to absorb part of that stack into the Claude Platform itself.

The benefit is not just reduced engineering effort. A managed memory layer can create a clearer record of what an agent learned and when it learned it. According to Anthropic, changes are tracked through audit logs and surfaced as session events, which means developers can trace a memory back to the agent and session that produced it.
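Tracing a memory back to its origin amounts to a lookup over change events. The following is a minimal sketch, assuming each event records an agent, a session, a file path, and an action. The `MemoryEvent` fields and the `last_writer` helper are hypothetical and do not reflect Anthropic's actual event schema.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class MemoryEvent:
    """Hypothetical audit record for one change to a memory file."""
    agent_id: str
    session_id: str
    path: str
    action: str  # e.g. "create", "update", "delete"

def last_writer(log: list[MemoryEvent], path: str) -> Optional[MemoryEvent]:
    """Return the most recent event that touched the given memory path."""
    for event in reversed(log):
        if event.path == path:
            return event
    return None

def demo() -> Optional[MemoryEvent]:
    log = [
        MemoryEvent("agent-a", "session-1", "invoices.md", "create"),
        MemoryEvent("agent-b", "session-2", "contracts.md", "create"),
        MemoryEvent("agent-b", "session-3", "invoices.md", "update"),
    ]
    return last_writer(log, "invoices.md")
```

With a log like this, a reviewer who finds a questionable note in `invoices.md` can see that `agent-b` last changed it during `session-3` and inspect that session.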

Customer Examples Show Where Memory Pays Off

Anthropic highlighted early users including Netflix, Rakuten, Wisedocs, and Ando. The examples point toward a common pattern: memory is most useful when agents repeat similar work, encounter recurring edge cases, or need to preserve corrections from humans.

Rakuten said its task-based long-running agents used memory to learn from every session and avoid past mistakes, reporting 97% fewer first-pass errors, 27% lower cost, and 34% lower latency. Wisedocs said its document verification pipeline used cross-session memory to remember common document issues and speed verification by 30%. Ando framed the feature more as infrastructure relief, saying memory lets the company focus on its workplace messaging product instead of building memory systems itself.

Those numbers come from Anthropic’s launch materials, so they should be read as customer-reported examples rather than universal benchmarks. Still, the use cases point in a consistent direction: memory becomes valuable when the agent is not starting from zero every time a workflow begins.

Why This Matters for the Agent Market

Built-in memory gives Anthropic another way to differentiate Claude as the agent market matures. The bigger competitive question is not only which model reasons best in isolation, but which platform gives developers the safest path to deploy agents that keep useful context over time.

For teams experimenting with agentic AI, memory can be both a capability boost and a governance headache. An agent that remembers well can become faster, more consistent, and more useful. An agent that remembers carelessly can preserve stale assumptions or sensitive information in ways that are hard to unwind. Anthropic is betting that filesystem-based memory, permissions, audit trails, and API-level control can make that tradeoff easier to manage.

Claude announced the public beta on X:

"Memory on Claude Managed Agents is now in public beta. Your agents can now learn from every session, using an intelligence-optimized memory layer that balances performance with flexibility." pic.twitter.com/P7GjOYPqCz

Claude (@claudeai), April 23, 2026

The release is less flashy than a new frontier model, but it may be more important for real deployments. If agents are going to become persistent participants in business workflows, they need a durable, inspectable way to learn. Anthropic’s public beta is a step toward making that memory layer part of the product rather than a custom component every team has to build alone.
