Definition

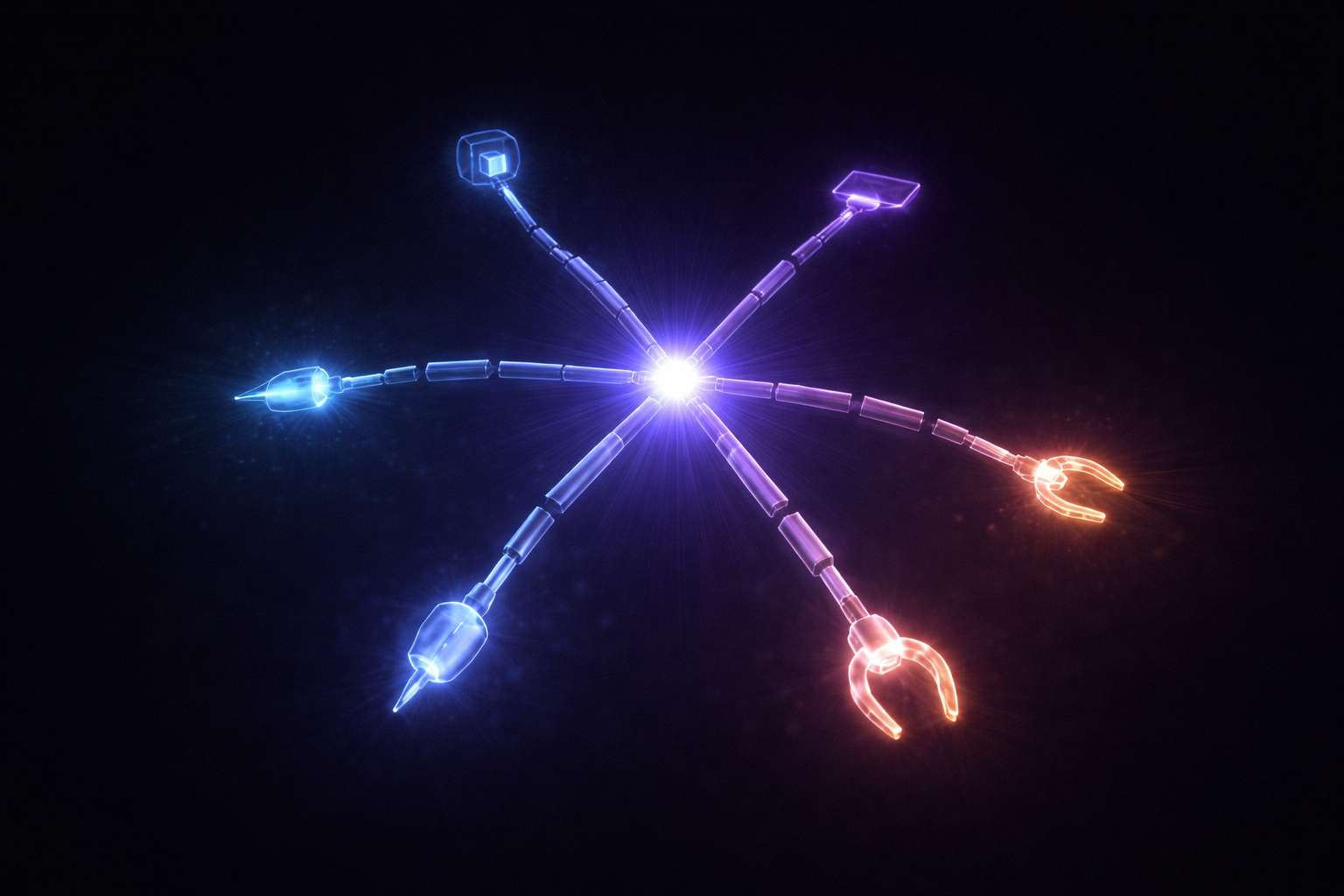

In artificial intelligence, “Tool Use” is the leap from “thinking” to “doing.” A standard Large Language Model is like a brain in a jar: it knows a lot, but it cannot interact with the outside world. Tool Use gives that brain “hands.” Through a technique called “Function Calling,” a developer gives the AI a list of tools it is allowed to use, such as a “Google Search Tool” or a “Python Interpreter.” When the AI determines that it cannot answer a question from its training data alone (e.g., “What is the current price of Bitcoin?”), it stops generating prose and instead generates a precisely formatted “tool call” (e.g., get_crypto_price("BTC")). A separate piece of software then executes that call and feeds the result back to the AI, which uses it to give an up-to-date response.
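To make the hand-off concrete, here is a minimal Python sketch of the “separate piece of software” that receives a tool call and executes it. The JSON layout of the call, the `TOOLS` registry, and the canned price are assumptions made for illustration; real providers each define their own call format, and a real `get_crypto_price` would query a live price API.

```python
import json


def get_crypto_price(symbol: str) -> float:
    """Hypothetical tool. A real version would query a price API."""
    placeholder_prices = {"BTC": 64000.0}  # made-up number, not a real quote
    return placeholder_prices[symbol]


# Registry mapping tool names to the Python functions that implement them.
TOOLS = {"get_crypto_price": get_crypto_price}

# What the model might emit instead of prose (this format is an assumption).
model_output = '{"tool": "get_crypto_price", "arguments": {"symbol": "BTC"}}'


def run_tool_call(raw: str):
    """Parse the model's tool call and execute the matching function."""
    call = json.loads(raw)
    func = TOOLS[call["tool"]]
    return func(**call["arguments"])


result = run_tool_call(model_output)
print(result)  # 64000.0, which the host would feed back to the model
```

The model never runs code itself in this arrangement. It only produces text that names a tool and its arguments, and the host decides whether and how to execute it.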

Why It Matters

Tool Use is what makes AI “Useful” for professional, real-world work. Without tools, an AI is limited by its “Frozen” memory—it can’t check the weather, it can’t book a flight, and it can’t solve a math problem more complex than its Pre-Training allows. Tool Use is the foundation for almost every advanced AI system today, from AI Agents to Retrieval-Augmented Generation (RAG).

The significance of tool use is its impact on Reliability and Truth. One of AI’s biggest problems is Hallucination, where a model confidently makes up a fact. If an AI has a “Calculator Tool,” it doesn’t have to “guess” the result of a math problem; it calls the tool and gets a computed answer, which is correct as long as the model passes in the right expression. This makes the AI far more dependable in domains with rigid rules, such as math, finance, and engineering. As more apps become “Agentic,” the ability for an AI to seamlessly “reach out” and use our existing software world is becoming one of the most important interfaces in technology.
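As an illustration of the “Calculator Tool” idea, the sketch below evaluates plain arithmetic by walking Python’s syntax tree instead of calling `eval`, so the tool can only do arithmetic. The function name `calculator` and the set of supported operators are choices made for this example, not a standard.

```python
import ast
import operator

# Only these arithmetic operators are supported; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculator(expression: str):
    """Hypothetical calculator tool: evaluate +, -, *, / on numeric literals."""

    def evaluate(node):
        if isinstance(node, ast.Constant):
            value = node.value
            if isinstance(value, (int, float)) and not isinstance(value, bool):
                return value
        elif isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        elif isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](evaluate(node.operand))
        raise ValueError("unsupported expression")

    return evaluate(ast.parse(expression, mode="eval").body)


print(calculator("1234 * 5678"))  # 7006652
```

The answer is computed rather than predicted, but the tool is only as good as its input: if the model sends the wrong expression, the result will be precisely wrong.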

How It Works

Tool Use works through a sophisticated “Loop” between the AI and a host environment.

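- System Prompt and Tool Definition: The developer gives the AI a list of tools, either inside the “System Prompt” or through a dedicated field of the API request, usually in a JSON format. Each tool has a name, a description, and a set of “parameters” (e.g., a weather tool might require a “city” parameter of type string). The description can also carry usage guidance, such as “Wait for the user’s city name before calling the weather tool.”

- Detection and Intent: When a user asks a question, the AI decides during Inference whether it can answer directly. If the question requires external data, the AI chooses to call a tool rather than answer from memory.

- The Call: The AI writes out the tool call in a specific, machine-readable format (typically JSON) and then stops generating, which hands control to the host software.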

- Execution and Observation: The host software (the “Environment”) sees the AI’s request, runs the specified code or API call, and captures the result (the “Observation”).

- Final Generation: The AI “reads” the observation and then generates a final, natural-language response based on that data.
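The tool definition from the first step might look like the following sketch. The overall shape (a name, a description, and JSON-Schema-style parameters) is common to function-calling APIs, but the exact field names vary by provider; `get_weather`, its parameters, and the helper `missing_arguments` are hypothetical.

```python
import json

# A hypothetical tool definition in a JSON-Schema-like layout.
weather_tool = {
    "name": "get_weather",
    "description": "Return the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string", "description": "City name, e.g. 'Paris'"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        "required": ["city"],
    },
}


def missing_arguments(tool: dict, arguments: dict) -> list:
    """List the required parameters that a tool call failed to supply."""
    required = tool["parameters"].get("required", [])
    return [name for name in required if name not in arguments]


print(json.dumps(weather_tool, indent=2))  # what the model is shown
print(missing_arguments(weather_tool, {"unit": "celsius"}))  # ['city']
```

Because the definition is plain data, the host can use the same structure both to describe the tool to the model and to check the model’s calls before running them.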

This entire process usually completes within a few seconds, depending on how long the tool itself takes, and often happens without the user ever realizing that the AI had to “go out” and use a separate piece of software to get the answer.
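The loop described in this section can be sketched end to end in a few lines. To keep the example runnable without an API key, `fake_model` is a scripted stand-in for a real LLM, and `get_weather` returns canned data; the message format and the `max_steps` cap are assumptions for illustration.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical tool with canned data. A real one would call a weather API."""
    return f"18 C and cloudy in {city}"


TOOLS = {"get_weather": get_weather}


def fake_model(messages: list) -> dict:
    """Stand-in for an LLM: asks for a tool first, then answers from the result."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "text", "content": f"Right now it is {last['content']}."}
    return {"type": "tool_call", "name": "get_weather", "arguments": {"city": "Paris"}}


def run_loop(user_question: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": user_question}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        if reply["type"] == "text":
            return reply["content"]  # final generation
        # Execution: the host, not the model, runs the requested tool.
        observation = TOOLS[reply["name"]](**reply["arguments"])
        # Observation: record the call and its result for the next model pass.
        messages.append({"role": "assistant", "content": json.dumps(reply)})
        messages.append({"role": "tool", "content": observation})
    raise RuntimeError("model did not finish within max_steps")


print(run_loop("What is the weather in Paris?"))
# Right now it is 18 C and cloudy in Paris.
```

Note that the model is invoked twice for a single question: once to produce the call and once to read the observation. A real agent may go around the loop several times before it produces a final answer.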

Applications

Tool Use is a core part of Software Development. Modern AI coding tools like Cursor or GitHub Copilot use “Terminal Tools” and “Linter Tools” to help the AI “see” and “fix” errors in real time. The AI doesn’t just “guess” why the code won’t run; it uses the terminal to try to run it, sees the error message, and then “acts” to fix it.

In Business and E-Commerce, tool use is behind “Actionable Chatbots.” When you ask a company’s bot, “Where is my order?”, the bot uses a “Shipment Tracking Tool” to look up your order in the company’s private database. It can then “use” a “Docusign Tool” to send you a refund form or a “Zendesk Tool” to open a support ticket on your behalf.

For Scientific and Academic Research, researchers give AI “Search Tools” that query sources like arXiv or Google Scholar. Instead of the AI “remembering” a paper from its training data (a recollection that might contain errors), it “uses” a search tool to find the exact PDF, “reads” the relevant section, and then provides a cited summary that can be checked against the source.

Finally, in Personal Productivity, tool use allows apps like Siri or Gemini to “use” your calendar, email, and maps to “book a dinner reservation” or “find a flight” autonomously.

Limitations

The biggest challenge with tool use is “Tool Frustration.” Sometimes the AI “thinks” a tool exists when it doesn’t (a form of hallucination), or it “calls” a tool with the wrong parameters (e.g., sending a zip code instead of a city name). This can lead to the AI getting “stuck” in a loop, repeatedly trying to use a broken tool without success.
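One common mitigation is to validate every tool call before running it and to cap the number of failed attempts. The sketch below assumes a hypothetical `lookup_weather` tool and a simple registry format; the error strings are the kind of feedback a host might return to the model so it can correct itself.

```python
def lookup_weather(city: str) -> str:
    """Hypothetical tool returning canned data."""
    return f"Sunny in {city}"


# Registry: each tool lists its required parameters and their expected types.
TOOLS = {"lookup_weather": {"func": lookup_weather, "required": {"city": str}}}


def check_call(name: str, arguments: dict):
    """Return an error message for the model, or None if the call looks valid."""
    spec = TOOLS.get(name)
    if spec is None:
        return f"unknown tool '{name}'; available: {sorted(TOOLS)}"
    for param, expected in spec["required"].items():
        if param not in arguments:
            return f"missing argument '{param}'"
        if not isinstance(arguments[param], expected):
            return f"argument '{param}' must be {expected.__name__}"
    unexpected = sorted(set(arguments) - set(spec["required"]))
    if unexpected:
        return f"unexpected arguments: {unexpected}"
    return None


def execute_with_cap(calls: list, max_failures: int = 3) -> str:
    """Run the first valid call, giving up after max_failures invalid ones."""
    failures = 0
    for name, arguments in calls:
        if check_call(name, arguments) is None:
            return TOOLS[name]["func"](**arguments)
        failures += 1
        if failures >= max_failures:
            return "giving up: too many invalid tool calls"
    return "no valid tool call was made"


attempts = [("lookup_weather", {"zip": 94103}), ("lookup_weather", {"city": "Oslo"})]
print(execute_with_cap(attempts))  # Sunny in Oslo
```

The cap is what breaks the “stuck in a loop” failure mode: after a fixed number of bad calls the host stops retrying and can fall back to an apology or a human hand-off.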

There is also a massive Security Risk. If an AI has a “Python Tool” that lets it run any code, a malicious user could potentially “trick” the AI (through a Jailbreak or prompt injection) into running a “Delete All Files” command. This makes Sandboxing (the practice of running AI tools in a secure, isolated environment) and robust Guardrails essential for every developer.
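A very small Guardrail can be expressed as a policy check that runs before any tool executes. The sketch below is an assumption-laden illustration: the tool names, the `ToolPolicy` class, and the three outcomes are hypothetical, and a check like this complements rather than replaces real Sandboxing, which needs isolation at the operating-system or container level.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    allowed: frozenset         # tools the model may request at all
    needs_approval: frozenset  # tools that also need a human sign-off


# Hypothetical policy for a file-handling assistant.
POLICY = ToolPolicy(
    allowed=frozenset({"read_file", "search_docs", "delete_file"}),
    needs_approval=frozenset({"delete_file"}),
)


def authorize(tool_name: str, approved_by_human: bool = False) -> str:
    """Decide whether a requested tool call may run: allow, ask_human, or deny."""
    if tool_name not in POLICY.allowed:
        return "deny"
    if tool_name in POLICY.needs_approval and not approved_by_human:
        return "ask_human"
    return "allow"


print(authorize("run_shell"))          # deny
print(authorize("delete_file"))        # ask_human
print(authorize("delete_file", True))  # allow
```

The key design choice is that the decision is made by ordinary code outside the model, so a persuasive prompt cannot talk its way past it.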

Finally, “Latency and Cost” are major hurdles. Every tool call adds a “Round Trip” to the AI process: the model must be run again with the tool result added to its context, which makes the response slower and more expensive in terms of Inference tokens. As models become more efficient and specialized for tool use (like the “Function Calling” models from OpenAI and Anthropic), these barriers are falling, but they still require careful engineering for any production-ready application.
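A back-of-the-envelope estimate shows how round trips add up. The function below is a deliberately simplified sketch: it assumes every model pass takes the same time and consumes the same number of tokens, whereas in practice the context grows with each observation. All figures in the usage example are made-up inputs, not measurements or real prices.

```python
def estimate(tool_calls: int, model_latency_s: float, tool_latency_s: float,
             tokens_per_pass: int, price_per_1k_tokens: float):
    """Rough latency, token, and cost estimate for one request (simplified)."""
    # One model pass per tool call, plus a final pass to write the answer.
    model_passes = tool_calls + 1
    latency_s = model_passes * model_latency_s + tool_calls * tool_latency_s
    tokens = model_passes * tokens_per_pass
    cost = tokens / 1000 * price_per_1k_tokens
    return latency_s, tokens, cost


# Hypothetical inputs: 2 tool calls, 1.5 s per model pass, 0.5 s per tool.
latency_s, tokens, cost = estimate(2, 1.5, 0.5, 1000, 0.01)
print(latency_s, tokens)  # 5.5 3000
```

Under these assumptions, a request with two tool calls takes roughly three times the model time of a direct answer, which is why production systems often batch, cache, or parallelize tool calls.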

Related Terms

- AI Agent: An autonomous system that uses tool use as its core way of interacting with the real world.

- Multi-Agent System: A group of specialized agents that share and coordinate different tools to solve a complex task.

- Large Language Model (LLM): The conversational “brain” that “decides” which tool to use and how to interpret the results.

- Inference: The phase in which a trained model is actually run to produce output; tool calls are generated, and their results are read, during this phase.

- Chain-of-Thought: A prompting technique that helps an AI “plan” which tool to use and “reason” about the observation it gets back.

- Grounding: The process of ensuring an AI’s output is based on the data retrieved from its tools rather than its own internal “memory.”

Further Reading

- Function Calling and Other API Capabilities (OpenAI) — A technical guide from OpenAI on how their models are optimized for tool use.

- Anthropic: Tool Use (Function Calling) Guide — Practical examples and best practices for building tool-using agents with the Claude models.

- The Gorilla Project: Tool Use for Large Language Models — A research-focused project from UC Berkeley on how models can learn to use thousands of different APIs.

- Wikipedia: Intelligent Agent (Software) — A broad overview of the history of “agents” and “tools” in computer science and artificial intelligence.