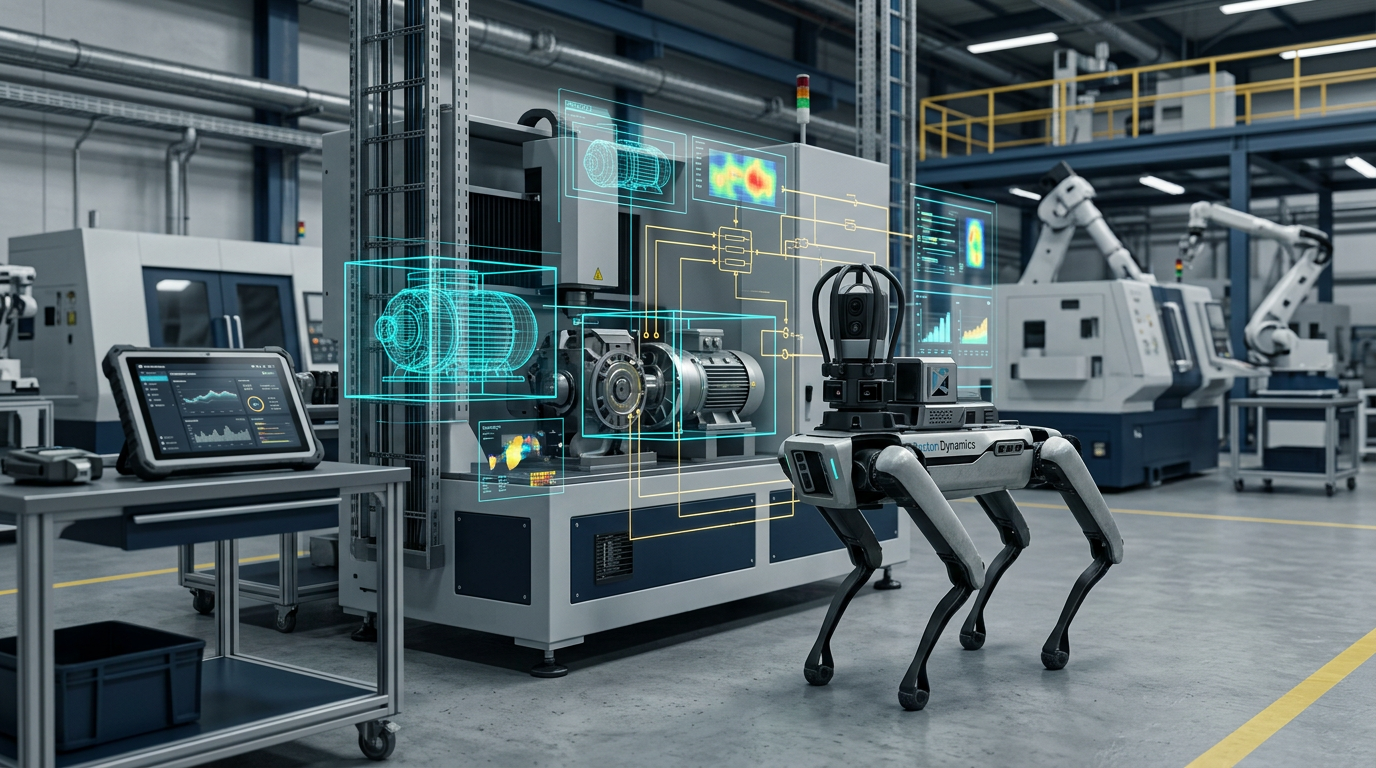

Boston Dynamics and Google DeepMind are moving industrial robotics deeper into the generative AI era. The two companies, alongside Google Cloud, have announced a partnership that will bring Gemini-powered reasoning into Boston Dynamics’ robot stack, starting with Spot and the AI visual inspection capabilities inside Orbit.

The significance is not just that another robot is getting a language model. It is that Google and Boston Dynamics are trying to make AI reasoning useful in the kind of industrial settings where visual ambiguity, complex asset environments, and long inspection cycles create real operational cost.

Gemini Moves Closer to the Factory Floor

At the center of the partnership is Gemini Robotics-ER 1.6, a robot-specific reasoning model that DeepMind introduced on April 14. DeepMind describes the model as a higher-level reasoning system with improved spatial understanding and multi-view interpretation, effectively acting as a more capable decision layer for physical agents operating in real environments.

Boston Dynamics says the model's reasoning will be applied to both Spot and Orbit’s AI visual inspection (AIVI) tools. In practical terms, the companies are aiming for more precise asset monitoring, stronger interpretation of industrial scenes, and more autonomous inspection workflows that improve over time as the system learns from live environments.

That is a meaningful enterprise step. Industrial inspection is one of the most defensible use cases for applied AI because the value is easier to measure: less downtime, earlier issue detection, more consistent monitoring, and a lower burden on human operators who would otherwise need to manually interpret large amounts of visual and operational data.

Why This Partnership Matters

The deal also shows how Google wants Gemini to compete outside the desktop and chatbot layer. Embedding Gemini into robots changes the conversation from productivity software to operational infrastructure. It is a bet that model reasoning can become part of the physical decision stack in warehouses, industrial plants, and other structured commercial environments.

Boston Dynamics is making a similar bet from the robotics side. The company has long had the machines, the mobility platform, and the inspection story. What AI offers is a way to push those systems beyond scripted recognition into more adaptive interpretation. Boston Dynamics put that case plainly in its announcement, arguing that industrial assets require more than basic object recognition and that Gemini can bring stronger conceptual understanding to the task.

This is also not the first time the two companies have worked together. Earlier this year, Boston Dynamics said it would use Gemini models to improve Atlas, its humanoid robot platform. That earlier collaboration hinted at a deeper relationship. This latest deal makes the commercial direction clearer: Google wants its models inside real robots, and Boston Dynamics wants a reasoning layer that can help those robots operate more usefully in the field.

The Bigger Picture for Enterprise AI

The broader implication is that enterprise AI is continuing to move from office software into operational systems. As models improve in spatial reasoning and perception-heavy tasks, the next phase of enterprise adoption may be less about drafting documents and more about interpreting the physical world in environments where mistakes are expensive and context is messy.

Whether this partnership delivers on that promise will depend on reliability more than raw model capability. As a market signal, though, it matters. Google DeepMind is no longer just talking about robotics as a research frontier. It is putting Gemini into industrial workflows where autonomy, inspection quality, and measurable ROI matter.
