Definition

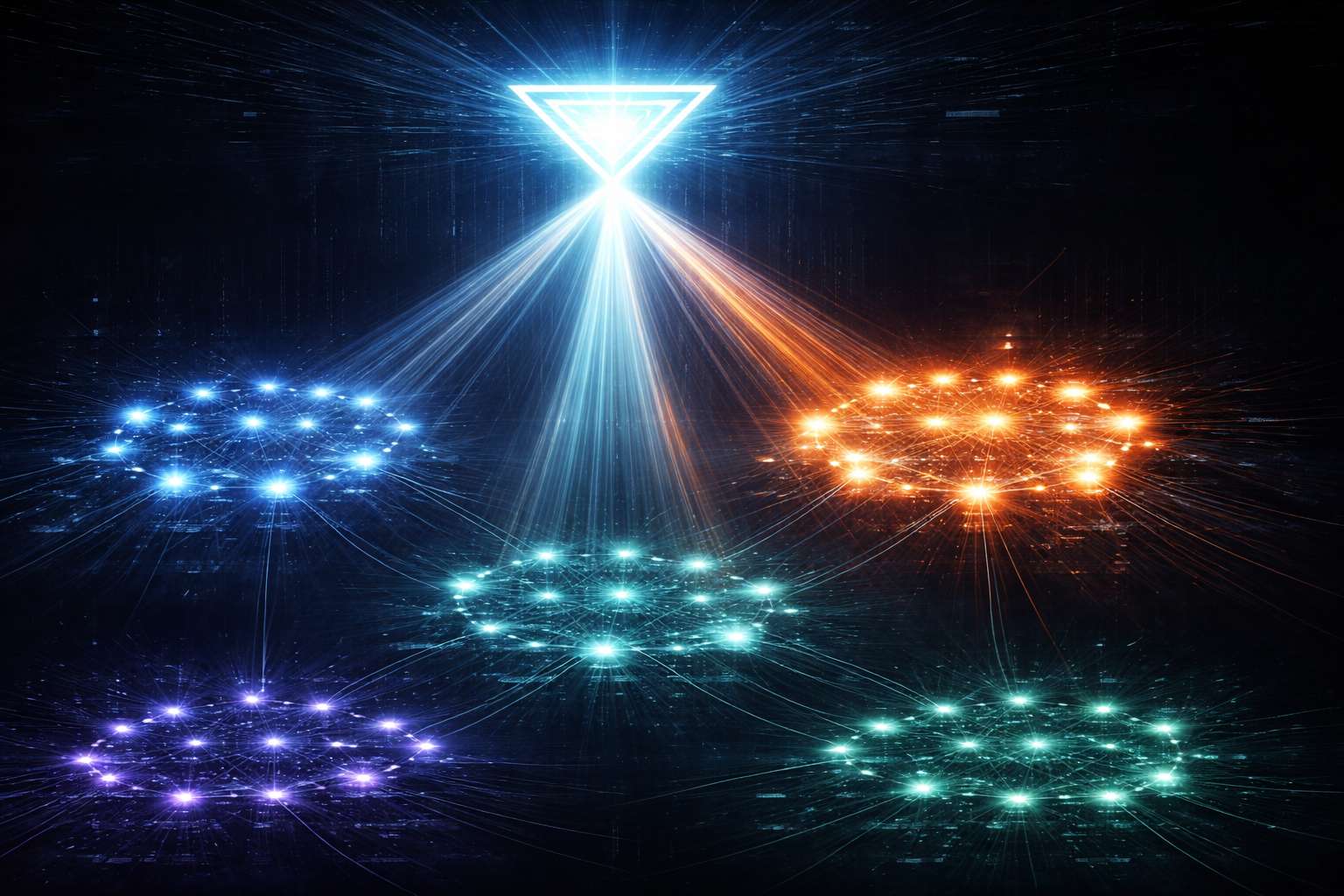

In the world of artificial intelligence, a “Mixture of Experts” (MoE) is a divide-and-conquer strategy for Large Language Models. A standard model like GPT-3 is a “dense” network, meaning it uses all 175 billion of its parameters to predict every single token. This is like a hospital where every doctor, from the brain surgeons to the pediatricians, has to see every patient. A “sparse” MoE model (like Mixtral and, reportedly, GPT-4) is like a hospital with a triage nurse (the “Gating Network”). For each incoming token, the gating network picks a small number of experts to activate and leaves the rest idle. It is tempting to picture a “History Expert” and a “Math Expert,” but that is a simplification: the experts are sub-networks inside the model, they are chosen token by token rather than per question, and what each one specializes in is learned during training and rarely lines up with human subject areas. The payoff is that a model can hold, say, 1 trillion parameters of total capacity while using only around 50 billion for any given token, making it much faster and cheaper to run than a dense model of the same total size.

Why It Matters

The significance of MoE is its role in Efficient Scaling. As AI researchers try to build more capable models, they usually have to add more parameters (the learned weights inside the network). In a dense model, doubling the parameters roughly doubles the compute cost of Inference, because every parameter is used for every token. MoE breaks this relationship. It allows a company like OpenAI or Mistral to build a model with broad knowledge across many subjects while keeping the live output fast and affordable enough for the public to use.

For the industry, MoE is the “secret weapon” behind several of the most powerful models of the last few years. It is why Mixtral 8x7B, which stores roughly 47 billion parameters but uses only about 13 billion of them per token, can match or beat the dense Llama 2 70B on most of the benchmarks Mistral reported. This “Sparse Computation” matters for the future of AI because it lets total model size grow without a matching increase in the electricity and high-end GPU time needed to answer each query.
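The gap between stored and used parameters can be checked with some back-of-the-envelope arithmetic. The sketch below assumes the hyperparameters publicly reported for Mixtral 8x7B (they do not come from this article) and ignores the tiny normalization layers, so treat the result as an estimate, not an official count.

```python
# Hyperparameters as publicly reported for Mixtral 8x7B (assumed, not from this article).
HIDDEN = 4096        # model (embedding) dimension
FFN_HIDDEN = 14336   # inner dimension of each expert's feed-forward block
LAYERS = 32
HEADS, KV_HEADS, HEAD_DIM = 32, 8, 128
VOCAB = 32000
NUM_EXPERTS, TOP_K = 8, 2

# One expert is a gated feed-forward block with three weight matrices.
expert_params = 3 * HIDDEN * FFN_HIDDEN
# Attention: query and output projections are full size; key and value
# projections are smaller because of grouped-query attention.
attn_params = 2 * HIDDEN * HEADS * HEAD_DIM + 2 * HIDDEN * KV_HEADS * HEAD_DIM
router_params = HIDDEN * NUM_EXPERTS
embed_params = 2 * VOCAB * HIDDEN  # input embeddings + output head


def model_params(experts_counted):
    """Parameter count when `experts_counted` experts per layer are included."""
    per_layer = attn_params + router_params + experts_counted * expert_params
    return LAYERS * per_layer + embed_params


total = model_params(NUM_EXPERTS)   # everything that must be stored
active = model_params(TOP_K)        # what one token actually passes through
print(f"total:  {total / 1e9:.1f}B")
print(f"active: {active / 1e9:.1f}B")
print(f"share used per token: {active / total:.0%}")
```

Under these assumptions the model stores about 46.7 billion parameters but touches only about 12.9 billion per token. Note that “8x7B” is not 56 billion: the attention and embedding weights are shared, and only the feed-forward experts are replicated.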

How It Works

An MoE model works through a sophisticated “Routing” pipeline.

- Expert Layers: Instead of one large feed-forward block in each Transformer layer, the model has 8, 16, or even 128 smaller “Expert” blocks.

- The Gating Network (The Router): For every incoming Token, a small “Router” network scores the experts to decide which ones should handle it.

- Active Parameters: The router typically picks the “Top-2” experts for each token (some designs pick only one, others more). Only the math for the selected experts is actually computed.

- Aggregation: The outputs from the selected experts are then combined as a weighted sum, using the router’s scores as weights, and passed to the next stage of the Transformer Architecture.

This “Selective Activation” is what allows the model to have a massive “Capacity” (total number of parameters) while maintaining a low “Compute Cost” (number of parameters used per token).
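The four steps above can be sketched in a few lines of plain Python. This is a toy illustration, not any particular model's implementation: the names `moe_layer`, `make_expert`, and `router_weights` are made up for the example, each “expert” is a single random linear map (real experts are small feed-forward networks), and the gate weights are computed with a softmax over the selected experts' scores, which is one common choice.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def make_expert(dim, rng):
    """A toy expert: one random dim x dim linear map."""
    return [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in range(dim)]


def moe_layer(token, router_weights, experts, top_k=2):
    """Route one token vector through its top_k experts and blend the outputs."""
    # 1. The router scores every expert for this token.
    logits = matvec(router_weights, token)
    # 2. Keep only the top_k highest-scoring experts.
    ranked = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)
    chosen = ranked[:top_k]
    # 3. Turn the chosen experts' scores into weights that sum to 1.
    gates = softmax([logits[i] for i in chosen])
    # 4. Run only the chosen experts and take the gate-weighted sum.
    output = [0.0] * len(token)
    for gate, idx in zip(gates, chosen):
        expert_out = matvec(experts[idx], token)
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output, chosen, gates


rng = random.Random(0)
DIM, NUM_EXPERTS = 4, 8
experts = [make_expert(DIM, rng) for _ in range(NUM_EXPERTS)]
router_weights = [[rng.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
token = [0.5, -1.0, 0.25, 2.0]

output, chosen, gates = moe_layer(token, router_weights, experts)
print("experts used:", chosen)
print("gate weights:", [round(g, 3) for g in gates])
```

Six of the eight experts are never evaluated for this token, which is where the compute saving comes from. In a real model the same routing happens independently in every MoE layer, so a single token may visit different experts at each layer.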

Applications

MoE is the foundation for several of the most famous Frontier AI Models. GPT-4 is widely reported to be an MoE model with 8 or 16 experts, although OpenAI has not confirmed its architecture. If the reports are accurate, this would help explain how it can be so much more knowledgeable than GPT-3 while still being relatively fast to chat with.

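In Open-Source AI, models like Mixtral have used MoE to “punch above their weight class.” Mixtral 8x7B computes each token with about as many parameters as a 13-billion-parameter dense model, yet performs comparably to much larger dense models. All of its roughly 47 billion parameters must still fit in memory, so running it locally usually means quantizing the weights or using a high-memory machine or multiple GPUs rather than a typical single consumer GPU. Even so, this has helped “democratize” high-end AI, allowing small businesses and researchers to run capable models on their own hardware without relying on expensive cloud APIs.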

Limitations

The biggest challenge with MoE is “Training Complexity.” It is harder to train an MoE model than a dense one. If the router’s choices are not kept balanced, the model will over-rely on just one or two experts (the “Expert Collapse” problem), while the others receive too few tokens to learn anything useful. A common remedy is to add an extra load-balancing term to the training loss that penalizes uneven routing.
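One widely used way to discourage collapse is an auxiliary load-balancing loss added to the training objective. The sketch below follows the form used in the Switch Transformer work (number of experts times the sum, over experts, of the fraction of tokens routed to each expert multiplied by the mean router probability for that expert). The function name and the toy inputs are hypothetical, and it assumes top-1 routing for simplicity.

```python
def load_balance_loss(router_probs, assignments, num_experts):
    """Switch-Transformer-style auxiliary loss: N * sum_i(f_i * P_i).

    router_probs: per-token list of router probabilities over the experts.
    assignments:  per-token index of the expert actually chosen (top-1).
    """
    num_tokens = len(assignments)
    # f_i: fraction of tokens dispatched to expert i.
    fraction = [assignments.count(i) / num_tokens for i in range(num_experts)]
    # P_i: mean router probability assigned to expert i.
    mean_prob = [
        sum(probs[i] for probs in router_probs) / num_tokens
        for i in range(num_experts)
    ]
    return num_experts * sum(f * p for f, p in zip(fraction, mean_prob))


# Balanced routing: four tokens spread evenly over four experts.
balanced_probs = [[0.25, 0.25, 0.25, 0.25]] * 4
balanced = load_balance_loss(balanced_probs, [0, 1, 2, 3], num_experts=4)

# Collapsed routing: every token goes to expert 0 with high confidence.
collapsed_probs = [[0.97, 0.01, 0.01, 0.01]] * 4
collapsed = load_balance_loss(collapsed_probs, [0, 0, 0, 0], num_experts=4)

print(f"balanced:  {balanced:.2f}")
print(f"collapsed: {collapsed:.2f}")
```

The loss sits at its minimum of 1.0 when routing is perfectly even and grows as traffic concentrates on a few experts, so adding it (scaled by a small coefficient) to the main loss nudges the router toward using every expert.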

There is also the “Memory (VRAM) Requirement.” While an MoE model is fast to run, it still has to load all of its experts into memory, because any expert may be needed for the next token. This means a 1-trillion parameter MoE model requires a massive amount of VRAM (about 2 TB for the weights alone at 16-bit precision), making it difficult to run on-device. Finally, “Expert Latency” is a factor; if different experts are stored on different GPUs, the communication between them can slow down the response time. Despite these hurdles, MoE remains attractive wherever keeping Inference costs down is a priority, which is the case for most developers building modern AI applications.
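To make the memory point concrete, here is a rough estimate for the illustrative model from the Definition section (1 trillion total parameters, 50 billion active). It counts the weights only and assumes common storage sizes per parameter; real deployments also need room for activations and the attention cache, so actual requirements are higher.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, dtype):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion parameters stored
ACTIVE_PARAMS = 50_000_000_000     # 50 billion parameters used per token

for dtype in BYTES_PER_PARAM:
    stored = weight_memory_gb(TOTAL_PARAMS, dtype)
    touched = weight_memory_gb(ACTIVE_PARAMS, dtype)
    print(f"{dtype}: {stored:,.0f} GB must be loaded, {touched:,.0f} GB is used per token")
```

Memory scales with the total parameter count while compute scales with the active count, which is why an MoE model can be cheap per token and still too large for a single device.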

Related Terms

- Transformer Architecture: The core design that MoE layers are added to for better scaling.

- Large Language Model (LLM): The type of text-generating model in which MoE layers are commonly used to add sparsity.

- Inference: The act of using a model to generate text, where MoE makes the process faster and cheaper.

- Pre-Training: The massive phase of learning that is much more difficult to manage for an MoE architecture.

- Tokenization: The process of breaking down text into the chunks that the “Router” then assigns to different experts.

- Model Distillation: A technique that can be used to “shrink” a massive MoE model into a smaller, dense one for use on mobile devices.

Further Reading

- Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer — The 2017 research paper from Google Brain that popularized the modern MoE framework.

- Mixtral of Experts: A New State-of-the-Art Open Model — An announcement of the first major open-source MoE model and its revolutionary performance.

- What is MoE? (Hugging Face Blog) — A clear, technical guide on how MoE is implemented in modern Transformer models.

- Wikipedia: Mixture of Experts — A comprehensive overview of the history, mathematical theory, and technical varieties of MoE in AI.