The premise sounds like a thought experiment from a conference panel: what if you replaced most of your engineering team with AI agents and ran them around the clock? For Vinay Pinnaka, co-founder and CTO of Mountain View-based fintech startup JustPaid, it stopped being a thought experiment about a month ago.

Seven Agents, One Engineering Team

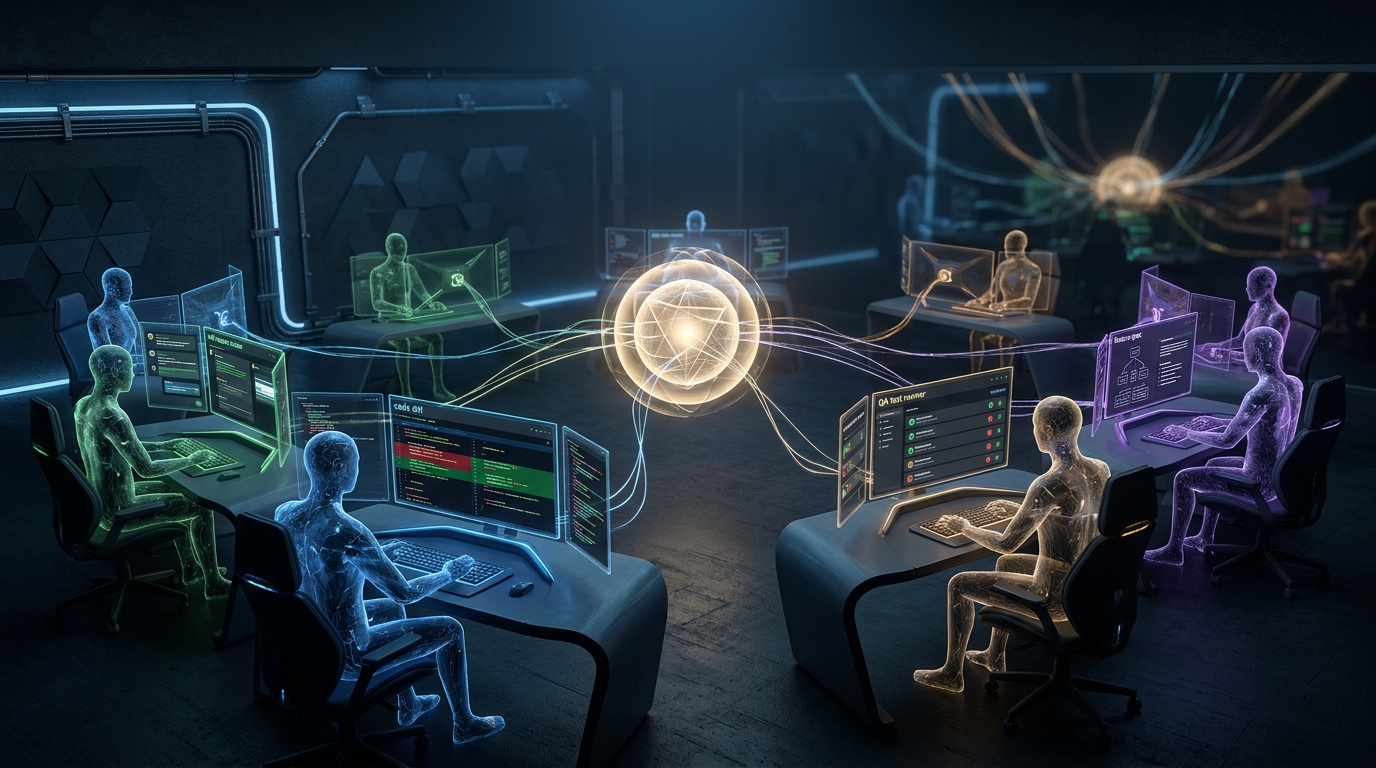

Pinnaka built a team of seven AI agents using a combination of OpenClaw — an open-source AI agent orchestration platform — and Anthropic’s Claude Code. Each agent has its own defined role and identity within the workflow: some write code, others review it, others handle quality assurance. Together they operate continuously, without standups, without vacation, and without the context-switching costs that slow human engineering teams.

In one month of operation, the agents have shipped 10 major features. Each of those features, Pinnaka says, would have taken his human developers a month or more to complete individually. Most recently, JustPaid hired a new human developer who was trained almost entirely by the AI agent team rather than by human colleagues — a signal of how deeply the AI layer has been woven into the company’s institutional knowledge transfer.

The Architecture: Brain and Hands

Pinnaka describes the system’s division of labor in straightforward terms. OpenClaw functions as “the brain that decides what needs to happen.” Claude Code is “the hands that do the coding work.” The relationship between the two reflects what makes agentic systems distinct from earlier AI coding tools: OpenClaw’s agents can plan multi-step tasks, spin up specialized subagents on demand, access files, and delegate subtasks autonomously — not just autocomplete lines of code.
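To make the "brain and hands" split concrete, here is a minimal Python sketch of an orchestrator that plans subtasks and hands each one to a role-specific worker. It is an illustration only, not OpenClaw's or Claude Code's actual API: `Orchestrator`, `Subtask`, `make_worker`, and the fixed three-step plan are all hypothetical, and the workers return strings rather than calling a model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Subtask:
    role: str          # hypothetical role label, e.g. "coder", "reviewer", "qa"
    description: str


@dataclass
class Orchestrator:
    """Hypothetical 'brain': decides what needs to happen and delegates it."""
    workers: dict[str, Callable[[str], str]]
    log: list[str] = field(default_factory=list)

    def plan(self, feature: str) -> list[Subtask]:
        # Fixed plan for illustration; a real planner would ask a model.
        return [
            Subtask("coder", f"implement {feature}"),
            Subtask("reviewer", f"review {feature}"),
            Subtask("qa", f"test {feature}"),
        ]

    def run(self, feature: str) -> list[str]:
        results = []
        for task in self.plan(feature):
            worker = self.workers[task.role]      # pick the worker for this role
            result = worker(task.description)     # delegate the subtask
            self.log.append(f"{task.role}: {result}")
            results.append(result)
        return results


def make_worker(role: str) -> Callable[[str], str]:
    # Stand-in for the 'hands': returns a string instead of writing code.
    def worker(description: str) -> str:
        return f"[{role}] done: {description}"
    return worker


workers = {role: make_worker(role) for role in ("coder", "reviewer", "qa")}
orchestrator = Orchestrator(workers)
results = orchestrator.run("invoice export")
for line in results:
    print(line)
```

The point of the design is that planning and execution sit behind separate interfaces, so either side can be swapped (a different planner, a cheaper worker) without touching the other.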

The catalyst for building the system was the release of Anthropic’s Opus 4.6 model in early February, which Pinnaka says dramatically improved Claude Code’s output quality. When the OpenClaw platform began gaining traction across Silicon Valley shortly after, the architectural possibility became obvious: a dedicated orchestration layer on top of a high-quality code generation model, configured as a persistent engineering organization rather than a one-off coding assistant.

JustPaid Is Not Alone

Kuse, a startup that builds AI co-workers on behalf of enterprise clients, has gone even further with a similar stack. Its AI agent employees don’t just write code — they send Slack and Gmail messages, participate in Zoom meetings, and proactively initiate work without being prompted. “We didn’t remove anyone or stop hiring,” the company said, “but we have fewer reporting lines and fewer meetings.”

The pattern — AI agents operating with persistent identities embedded in communication and workflow tools rather than isolated in a coding environment — represents a meaningful evolution from the first generation of AI coding assistants.

The Costs Are Real

The economics of running autonomous agent teams are not trivial. When Pinnaka first began experimenting with the combination of Claude Code and OpenClaw, his weekly API bill reached $4,000. Token costs accumulate rapidly when agents are running multiple background tasks in parallel and making frequent model calls to plan, execute, verify, and delegate.

Through a series of adjustments, primarily switching to a smaller, more efficient Claude model for lower-stakes subtasks, he brought the bill down to between $10,000 and $15,000 a month. His conclusion on the trade-off: “Even if I’m spending the same amount of money on a Silicon Valley engineer versus AI, I’d still pick AI because it is able to work at a different scale.”
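Because the early figure is quoted per week and the later one per month, the two are easier to compare on a common basis. The short Python sketch below does that arithmetic. It assumes, purely for illustration, that the $4,000 weekly peak was sustained for a full month, which the reporting does not state; the helper names are hypothetical.

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a calendar month


def weekly_to_monthly(weekly_cost: float) -> float:
    """Convert a weekly spend into an average monthly spend."""
    return weekly_cost * WEEKS_PER_MONTH


def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# Assumption: the $4,000 peak week is treated as a steady run rate.
peak_monthly = weekly_to_monthly(4_000)
low, high = 10_000, 15_000  # monthly range reported after the adjustments

print(round(peak_monthly))                            # 17333
print(round(percent_reduction(peak_monthly, high)))   # 13
print(round(percent_reduction(peak_monthly, low)))    # 42
```

Under that assumption, the adjustments correspond to a reduction of roughly 13 to 42 percent from the peak run rate.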

The Caution Layer

Not everyone is deploying OpenClaw without guardrails. Tatyana Mamut, co-founder and CEO of Wayfound — a startup that helps enterprises monitor AI agent behavior — said she is experimenting with the platform only in a strictly controlled, isolated environment with no access to production business data. “OpenClaw and other agents that are left to their own devices to make decisions need to be supervised all the time,” Mamut said.

The concern is concrete: agents with broad system access can tamper with or delete files when they go off-script. That risk profile is why large enterprises have largely stayed on the sidelines, and why companies like Nvidia have invested in enterprise-grade agent safety tooling designed to constrain what autonomous agents can actually do.
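One simple form of constraint is to refuse any file operation that resolves outside a designated working directory. The Python sketch below shows the idea using only the standard library (it needs Python 3.9 or later for `Path.is_relative_to`). It is a generic illustration, not how OpenClaw, Wayfound, or Nvidia's tooling works; `is_within_sandbox` and `guarded_delete` are hypothetical names.

```python
import tempfile
from pathlib import Path


def is_within_sandbox(target: str, sandbox_root: Path) -> bool:
    """True only if `target` resolves to a location inside `sandbox_root`."""
    resolved = (sandbox_root / target).resolve()
    return resolved.is_relative_to(sandbox_root.resolve())


def guarded_delete(target: str, sandbox_root: Path) -> bool:
    """Delete a file only when it lives inside the sandbox; report success."""
    if not is_within_sandbox(target, sandbox_root):
        return False  # path escapes the sandbox, so refuse
    path = (sandbox_root / target).resolve()
    if path.is_file():
        path.unlink()
        return True
    return False


# Demonstration in a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "scratch.txt").write_text("temporary")
    allowed = guarded_delete("scratch.txt", root)      # inside the sandbox
    blocked = guarded_delete("../outside.txt", root)   # tries to escape

print(allowed, blocked)
```

A check like this only narrows what an agent can reach through one code path; it does not replace the isolated environment and continuous supervision that Mamut describes.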

What Analysts Are Watching

Arun Chandrasekaran, an AI-focused analyst at Gartner, frames the shift in terms of organizational structure rather than individual job displacement. Large engineering teams segmented by function — frontend, backend, QA, DevOps — could compress significantly as AI coding tools improve. “They’re starting to wonder, ‘How does this fundamentally change the way we do software development?’” he said.

Pinnaka’s own answer to that question is a manager-led model: as AI agents take over execution, human engineers shift into oversight and direction roles. “As we automate every single task, there should be a manager,” he said. His human engineers still have jobs — they are just no longer the ones writing the code.

Pinnaka also describes a more distant horizon: “Once AI gets to the stage where it is able to handle human empathy, I would be able to say, ‘I can replace everyone with AI.’” For now, the empathy layer, and the customer relationships that depend on it, remains a human responsibility.
